Moving to the cloud can feel like an impending zombie apocalypse as you wonder who could gain access to your assets and launch attacks against your company once you migrate. Stepping into the unknown is unnerving for many companies. However, with the rapid growth and popularity of the cloud today, organizations are wondering not if they’ll move to the cloud, but when. And when they do, they want to know how to take proactive measures to reduce risk exposure and stay safe.

In a recent webcast, Rapid7’s Head of Labs, Derek Abdine, shared the top cloud configuration mistakes organizations make and five rules to implement so you can migrate securely to the cloud.

Cloud security rule No. 1: Controlling access

Who is gaining access to resources in your cloud environment is a big unknown for many organizations. The best way to keep unwanted users out is to use temporary security credentials.

With just a simple search on GitHub, you can usually find zombie credentials or access keys unintentionally embedded within configuration files. This is not a fault of GitHub, but rather of application developers who toss access keys into these files and accidentally commit them. Leaked keys also turn up in other content that gets indexed by Google. Regardless of where they show up, you never want static access keys out in public.

One of the best ways to protect your account is to avoid statically defined keys (especially for the root user) whenever you can. Instead, use temporary credentials or IAM roles to manage cloud access. Developers can work through tools like Rapid7’s open source awsaml, which integrates with a provider like Okta to issue temporary tokens for running their tools. If you do need to use static keys, putting the right rules and policies in place will be key. For git repositories specifically, you can prevent accidental commits of secrets with projects like AWS Labs’ git-secrets.
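As a rough illustration of the kind of check a secret scanner performs, the sketch below searches text for strings shaped like AWS access key IDs. It is not how git-secrets is implemented; the function name `find_access_keys` and the simplified pattern are assumptions made for this example, and a real scanner covers many more secret formats.

```python
import re

# AWS access key IDs are 20 characters: a 4-letter prefix followed by 16
# uppercase letters or digits. "AKIA" marks long-lived (static) keys and
# "ASIA" marks temporary keys issued by STS. Simplified pattern for this sketch.
ACCESS_KEY_RE = re.compile(r"\b(AKIA|ASIA)[A-Z0-9]{16}\b")


def find_access_keys(text):
    """Return (line_number, key_id, kind) for each key ID found in text."""
    findings = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for match in ACCESS_KEY_RE.finditer(line):
            kind = "static" if match.group(1) == "AKIA" else "temporary"
            findings.append((line_number, match.group(0), kind))
    return findings


if __name__ == "__main__":
    # AKIAIOSFODNN7EXAMPLE is the placeholder key ID used in AWS documentation.
    sample = "region = us-east-1\naws_access_key_id = AKIAIOSFODNN7EXAMPLE\n"
    for line_number, key_id, kind in find_access_keys(sample):
        print(f"line {line_number}: {kind} key ID {key_id}")
```

A check like this could run as a pre-commit step so that a static key is caught before it ever reaches a public repository.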

Cloud security rule No. 2: Limiting exposure

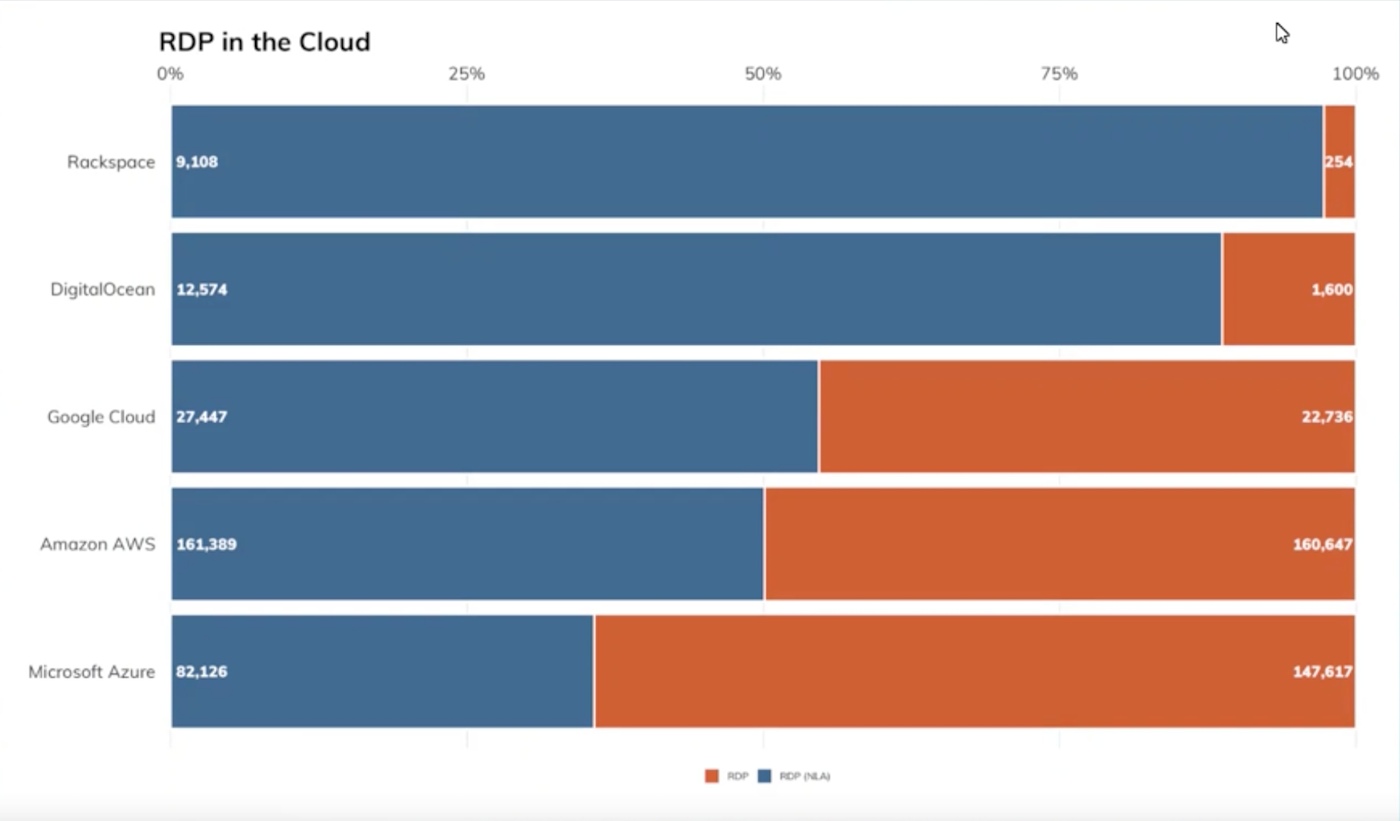

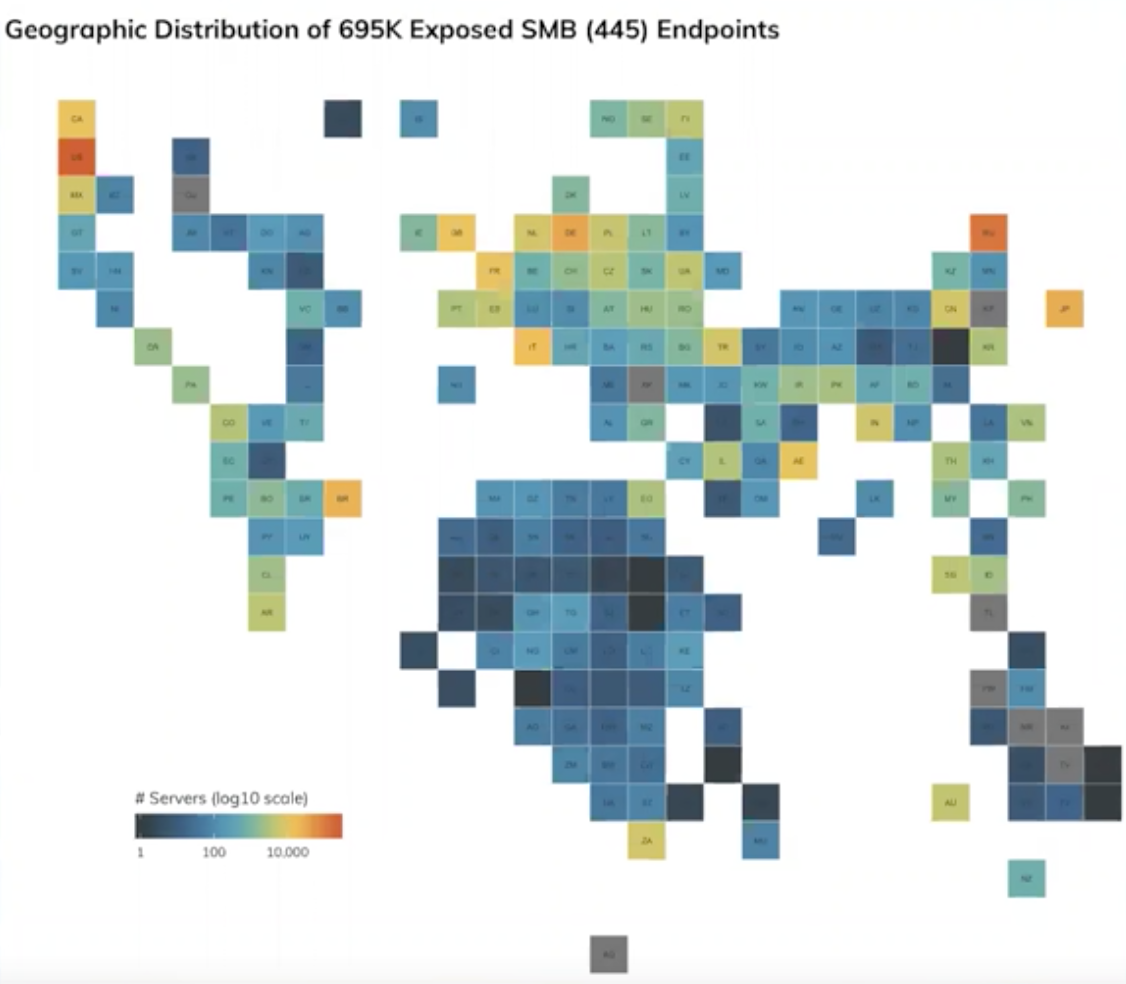

The smaller your footprint, the harder you make it for attackers to gain access to your cloud environment. Simply put, don’t expose anything you don’t need to. The first step is to reduce your attack surface by placing cloud infrastructure on private networks (VPCs) instead of leaving services such as Remote Desktop Protocol (RDP) or Server Message Block (SMB) reachable from the public internet.

You can also leverage firewall rules or security groups so that if an administrator needs to access MySQL, for example, they’d have to SSH or VPN into the cloud environment first, which significantly limits exposure to malicious actors.
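To make the idea concrete, here is a minimal sketch of an audit that flags inbound rules opening sensitive services to the whole internet. The rule shape (`from_port`, `to_port`, `cidr`), the port list, and the function names are hypothetical and chosen for illustration; they do not mirror any particular provider’s API.

```python
import ipaddress

# Ports treated as sensitive in this sketch: SSH, SMB, MySQL, RDP.
SENSITIVE_PORTS = {22: "SSH", 445: "SMB", 3306: "MySQL", 3389: "RDP"}


def is_world_open(cidr):
    """True if the CIDR block covers every address (0.0.0.0/0 or ::/0)."""
    return ipaddress.ip_network(cidr, strict=False).prefixlen == 0


def exposed_services(rules):
    """Return sorted names of sensitive services reachable from anywhere.

    Each rule is a hypothetical dict with "from_port", "to_port", and "cidr".
    """
    exposed = set()
    for rule in rules:
        if not is_world_open(rule["cidr"]):
            continue  # Restricted source range: not publicly reachable.
        for port, name in SENSITIVE_PORTS.items():
            if rule["from_port"] <= port <= rule["to_port"]:
                exposed.add(name)
    return sorted(exposed)


if __name__ == "__main__":
    rules = [
        {"from_port": 443, "to_port": 443, "cidr": "0.0.0.0/0"},
        {"from_port": 3306, "to_port": 3306, "cidr": "10.0.0.0/16"},
        {"from_port": 3389, "to_port": 3389, "cidr": "0.0.0.0/0"},
    ]
    print(exposed_services(rules))
```

In this sample, MySQL is limited to a private range and passes, while RDP open to `0.0.0.0/0` is flagged.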

It’s also important to always monitor for exposed assets. Shadow IT is a real issue that often gets overlooked, and because cloud environments are highly dynamic, you need visibility into what you have out there and what is exposed. InsightVM’s Cloud Configuration Assessment can help you see which assets have been made public due to misconfiguration, while Rapid7’s Open Data is a free resource for global public internet exposure data.

Cloud security rule No. 3: Control your names

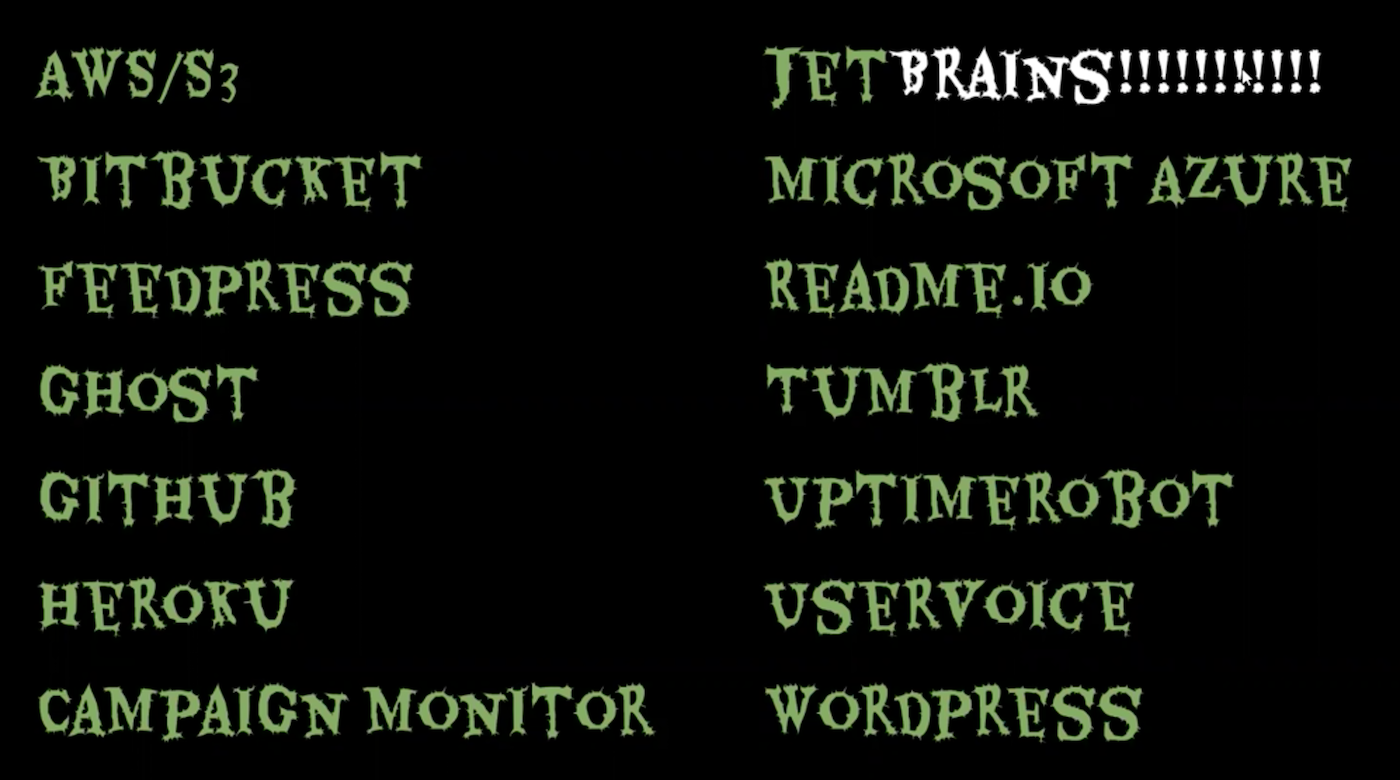

Pop quiz: What do the below services all have in common?

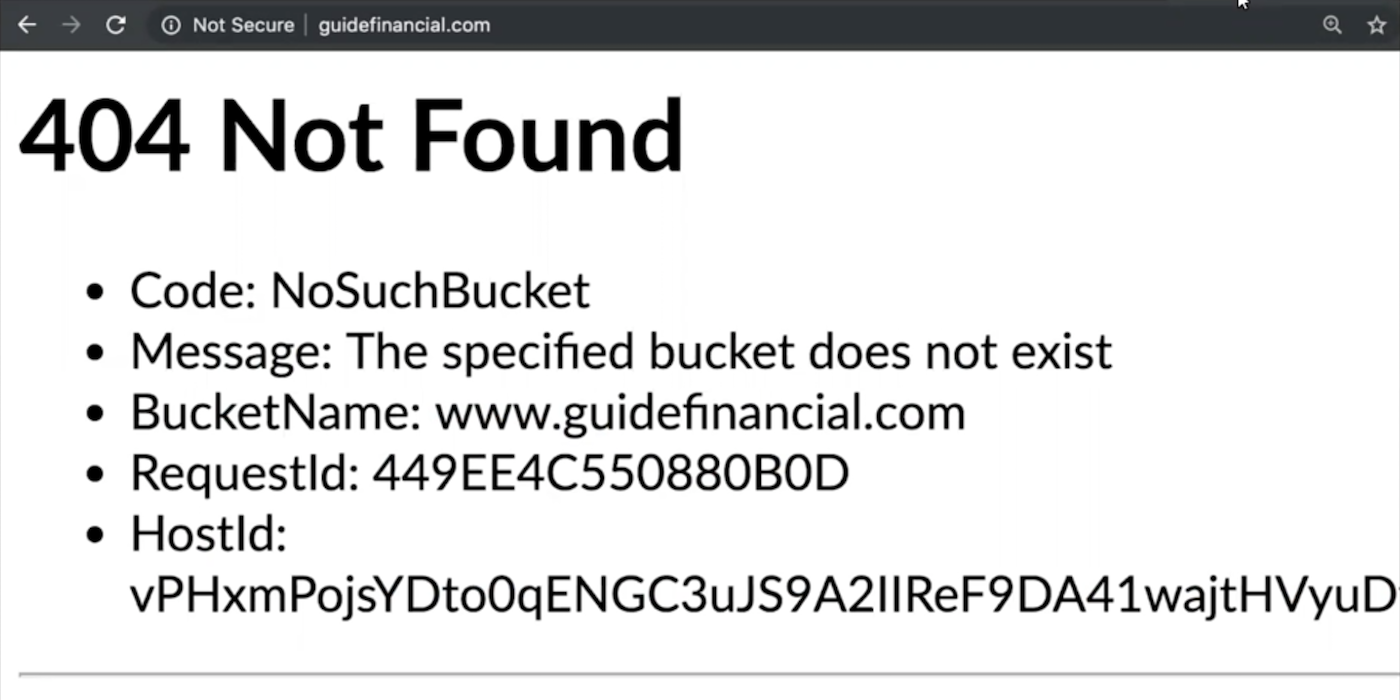

Answer: They’re all potential vectors for domain or subdomain takeovers. Case in point: Let’s say a small organization registers a domain and points a DNS record for it at an AWS S3 bucket. A few years later, the company goes out of business and the bucket is deleted, but the domain name still exists. If you went to their domain, you’d see an error message saying the bucket does not exist.

This is an invitation for someone to take advantage of the domain. All they’d need to do is create a bucket on Amazon with the same name, and they would instantly control whatever content the domain serves. This is a classic example of subdomain takeover, requiring only a stale DNS record that an administrator forgot about.

To avoid this, release, sell, or decommission any names your business no longer uses. For those that are still in use, be sure to monitor them in your DNS and avoid services that are prone to takeover. Cloud DNS services that update CNAME, A, and AAAA records dynamically as resources change can help keep you safe.
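The monitoring step can be sketched as a comparison between your DNS records and the buckets you actually own. In the example below, the hostname pattern is a simplified approximation of S3 endpoint names, and `dangling_s3_records` along with its inputs are hypothetical names used only for illustration.

```python
import re

# Simplified match for S3-style targets such as
#   <bucket>.s3.amazonaws.com
#   <bucket>.s3-website-us-east-1.amazonaws.com
# Real endpoint formats vary; treat this pattern as an assumption.
S3_TARGET_RE = re.compile(
    r"^(?P<bucket>.+?)\.s3[.-][a-z0-9.-]*amazonaws\.com\.?$"
)


def dangling_s3_records(cname_records, owned_buckets):
    """Return record names whose CNAME targets an S3 bucket you do not own.

    cname_records maps a DNS name to its CNAME target.
    owned_buckets is the set of bucket names currently in your account.
    """
    dangling = []
    for name, target in sorted(cname_records.items()):
        match = S3_TARGET_RE.match(target.lower())
        if match and match.group("bucket") not in owned_buckets:
            dangling.append(name)
    return dangling


if __name__ == "__main__":
    records = {
        "www.example.com": "www.example.com.s3.amazonaws.com",
        "old.example.com": "old.example.com.s3-website-us-east-1.amazonaws.com",
        "blog.example.com": "hosting.example.net",
    }
    print(dangling_s3_records(records, owned_buckets={"www.example.com"}))
```

Any name this reports is a candidate for takeover: either recreate the bucket under your own account or delete the record.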

Cloud security rule No. 4: No public buckets

One incredibly common source of data breaches is leaving a data bucket open for an adversary to access. These breaches are exceedingly frustrating to witness, not just because of the data that gets exposed, but because they can be so easily avoided.

Cloud providers today can provide you with visibility into which buckets are public and which ones are not, as well as global policy settings for public buckets. These policies alone have significantly reduced the amount of data that can be stolen in a breach.

Plus, serverless technology can now automatically detect which buckets are public and send reports to administrators, so they can identify and close buckets that shouldn’t be public. This gives companies the visibility to take action before anyone else can.
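A small function of the kind such an automated check might run is sketched below: it inspects a bucket policy document and reports statements that allow access to any principal. The function names are hypothetical, and the logic is deliberately simplified. It skips statements that carry a `Condition` and ignores ACLs and account-level settings, so it is not a substitute for your provider’s own public-access reporting.

```python
import json


def _as_list(value):
    """Policies allow a single item or a list; normalize to a list."""
    return value if isinstance(value, list) else [value]


def is_wildcard_principal(principal):
    """True if the principal is "*" or contains "*" (e.g. {"AWS": "*"})."""
    if principal == "*":
        return True
    if isinstance(principal, dict):
        return any("*" in _as_list(v) for v in principal.values())
    return False


def public_statements(policy_json):
    """Return the Sid of each unconditional Allow statement open to anyone."""
    policy = json.loads(policy_json)
    flagged = []
    for statement in _as_list(policy.get("Statement", [])):
        if (
            statement.get("Effect") == "Allow"
            and is_wildcard_principal(statement.get("Principal"))
            and "Condition" not in statement  # Simplification: see lead-in.
        ):
            flagged.append(statement.get("Sid", "<no Sid>"))
    return flagged


if __name__ == "__main__":
    policy = json.dumps({
        "Version": "2012-10-17",
        "Statement": [{
            "Sid": "PublicRead",
            "Effect": "Allow",
            "Principal": "*",
            "Action": "s3:GetObject",
            "Resource": "arn:aws:s3:::example-bucket/*",
        }],
    })
    print(public_statements(policy))
```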

Cloud security rule No. 5: Automate dynamism in the cloud

When you think of dynamism, you may think of things like IP addresses, host names, and other infrastructure that changes often in the cloud. Because attackers are prone to taking advantage of the dynamic nature of the cloud, we need the ability to stay ahead of them. How? By utilizing security orchestration, automation, and response (SOAR).

It can take under 60 seconds for unwanted traffic to arrive when you bring something online. And, if you release an AWS resource, someone else can obtain its IP address in under 60 seconds. Information about your app can leak easily if you’re not orchestrating and automating dynamism around IP addresses and names in the cloud with a SOAR solution like InsightConnect.
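One piece of that automation can be sketched as a reconciliation job: compare the addresses your DNS records point at with the addresses you currently hold, and flag anything that has been released. The function name and data shapes below are assumptions for illustration, and the sketch is independent of any SOAR product.

```python
import ipaddress


def stale_address_records(dns_records, owned_ips):
    """Return {name: [addresses]} for records pointing at IPs you no longer hold.

    dns_records maps a DNS name to the list of addresses in its A/AAAA records.
    owned_ips is an iterable of the addresses currently allocated to you.
    """
    # Parse addresses so equivalent spellings (e.g. IPv6 forms) compare equal.
    owned = {ipaddress.ip_address(ip) for ip in owned_ips}
    stale = {}
    for name, addresses in dns_records.items():
        gone = [a for a in addresses if ipaddress.ip_address(a) not in owned]
        if gone:
            stale[name] = gone
    return stale


if __name__ == "__main__":
    records = {
        "app.example.com": ["203.0.113.10", "203.0.113.11"],
        "api.example.com": ["203.0.113.20"],
    }
    print(stale_address_records(records, ["203.0.113.10", "203.0.113.20"]))
```

Given how quickly a released address can be picked up by someone else, a job like this is most useful when it runs as part of the teardown workflow rather than on a slow schedule.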

Top takeaways

Derek covered an incredible amount of information in a short amount of time in this webcast, so here are his top takeaways:

- Build resilient apps. Apps today receive a lot of strange traffic, so building in visibility and security is the key to staying on top of that.

- Use security groups wisely. Don’t apply the same security group to everything. Instead, think about how security groups can enable the business while locking down the specific services that account for your most important exposure.

- Consider using Lambdas. You certainly don’t want zombie Lambdas connecting to resources you don’t maintain or monitor, but you do want good Lambdas that allow you to automate issues around dynamism and that help you audit and address problems fast, which is where security orchestration and automation comes in.

Rapid7’s internet scanning project, Project Sonar, and global honeynet, Project Lorelei, are two key ways you can reduce your risk exposure while moving securely to the cloud. With over 60 billion DNS lookups every two weeks and over 200 monthly scans across 50 ports, Sonar’s active internet telemetry collector can be used for free to keep you in the know. And, by tracking attacker campaigns and techniques across 250+ low-to-medium interaction honeypots, Heisenberg’s passive Internet telemetry provides essential data to regional CERTs.

Begin implementing the rules in this post, and zombie activity will soon be a threat of the past.
