This post originally appeared on the Smart Bear blog. To read more content like this, subscribe to the Software Quality Matters Blog.

Frustrations with lack of tool unification might just lead to revolution in the APM space…

Application Performance Management (APM) is a broad concept, and many technologies fall under its umbrella. With renewed focus on performance, APM is a very hot topic and a high-growth area for products and services, which has set off a gold rush of new tech vendors entering the space. With so many players now in the game, the APM market is much more fragmented than it used to be. While the spike in innovation and the growing number of APM tools on the market today are incredibly exciting, the problem is that we suddenly find ourselves using a different tool for each separate piece of the APM puzzle, and none of these tools and technologies is designed to work with the others. There is no Swiss army knife for APM.

Are your apples really apples?

There are many components involved in APM, and each is measured in different ways with different metrics. One tool may look at one part of the picture, while another looks at the same picture from a different angle, using a different methodology and producing a different result. Any comparison between the two tools’ output will therefore tend to show differing metrics. In a multi-tool environment it’s usually not clear whether you are truly comparing apples to apples, and that can significantly skew the interpretation of the output.

Let’s say that you use synthetic event-generating tools to check availability. Even if such a tool shows good availability, another tool located inside the firewall may test availability based on user location and find blockages that the synthetic event tool never triggers. Where the tools sit within the network topology matters just as much as what they measure and how they measure it.
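To make the vantage-point issue concrete, here is a minimal Python sketch. It assumes each tool's probe results have already been exported as simple (vantage point, succeeded) pairs; the vantage names and the `availability_by_vantage` helper are hypothetical, not part of any real APM product.

```python
from collections import defaultdict


def availability_by_vantage(results):
    """Return the fraction of successful probes for each vantage point.

    `results` is an iterable of (vantage, succeeded) pairs. Both the
    shape of the data and the vantage names are assumptions made for
    this illustration.
    """
    totals = defaultdict(int)
    successes = defaultdict(int)
    for vantage, succeeded in results:
        totals[vantage] += 1
        if succeeded:
            successes[vantage] += 1
    return {vantage: successes[vantage] / totals[vantage] for vantage in totals}


# Hypothetical probe results: the external synthetic checks all pass,
# while half of the checks made from inside the firewall fail.
probe_results = [
    ("external-synthetic", True),
    ("external-synthetic", True),
    ("external-synthetic", True),
    ("external-synthetic", True),
    ("inside-firewall", True),
    ("inside-firewall", False),
    ("inside-firewall", False),
    ("inside-firewall", True),
]

availability = availability_by_vantage(probe_results)
for vantage, fraction in sorted(availability.items()):
    print(f"{vantage}: {fraction:.0%} available")
```

Reporting the two figures side by side, rather than one number per tool in separate consoles, is what makes the gap between vantage points visible at all.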

The dirty secret that sellers of individual APM tools don’t mention is that the tool they sell you will not talk to a tool made by another vendor. It’s by design, not accident. The vendor wants you to use their tool, not someone else’s. Each tool hides its results in its own data silo and won’t communicate with other processes that may be running at the same time. But this kind of thinking ensures that you miss the synergistic effect of having two tools look at the same situation. That synergy is hard to achieve if you have to manually integrate the output of two (or more) tools that are looking at a problem at the same time. It makes for a lousy user experience (UX), one that none of the many groups affected by APM (ops, developers, testers, end-users) likes very much.

The practice of using point solutions from a number of different vendors that can’t intercommunicate also stops one tool from being able to set a boundary condition for another. As an example, let’s say that your deep packet inspection (DPI) tool is sniffing the wires to see why a node is jamming up. Doing DPI is hard in the best of cases (and computationally intensive), much like being handed a raw syslog dump and expecting to find something that will help rectify a problem. Let’s say you use a synthetic APM tool to generate the events you think will cause a fault. If the event tool can’t tell the DPI tool to concentrate only on packets captured after the synthetic events are generated, there will be a mass of unneeded packet inspections to wade through in the analysis.
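The boundary condition described above can be sketched in a few lines of Python. This assumes both tools can export timestamps on a shared clock; the `SyntheticEvent` and `Packet` types, the `packets_in_event_windows` helper, and the five-second window are all hypothetical choices for illustration, not any vendor's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SyntheticEvent:
    name: str
    started_at: float  # seconds on a clock shared with the capture tool (assumed)


@dataclass(frozen=True)
class Packet:
    captured_at: float  # same clock as SyntheticEvent.started_at (assumed)
    summary: str


def packets_in_event_windows(packets, events, window_seconds=5.0):
    """Keep only packets captured within `window_seconds` after an event starts."""
    windows = [(e.started_at, e.started_at + window_seconds) for e in events]
    return [
        p for p in packets
        if any(start <= p.captured_at <= end for start, end in windows)
    ]


# Hypothetical data: one synthetic event and three captured packets.
events = [SyntheticEvent("login-check", started_at=100.0)]
packets = [
    Packet(99.0, "background traffic"),
    Packet(101.5, "SYN to app node"),
    Packet(110.0, "background traffic"),
]

relevant = packets_in_event_windows(packets, events)
for packet in relevant:
    print(packet.captured_at, packet.summary)
```

When the tools cannot exchange even this much, the same filtering has to be done by hand after the fact, which is exactly the wading-through the example warns about.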

Multiple tools can result in multiple problems

Further complicating things, each of the multiple tools being used in a complex situation could be the wrong tool for that specific situation. If a problem arises primarily from the network environment, then doing DPI on the wire at one location in the network may not even show it. There is just no guarantee that the specific tools chosen will help reveal the answer to a problem, regardless of what the problem is. This means the user wants the widest possible field of vision around an event, so they can get actionable intelligence from the analysis. This speaks to the necessity of tools that talk to one another.

Users can’t be poked

APM users themselves have also become disenchanted with the complexity and lack of integration of the tools that have evolved. At first, new features seemed like a good idea, and in fact people needed more features to solve their problems. But at a certain point, like the “feature-itis” that ate Word over the years, the tools moved from solving one problem well to being so complicated that the user has no idea how to solve the original problem easily. The added features may seem useful, but finding and understanding the new stuff inside a program that has been in use for a while requires users to retrain themselves with every new release. Users will only put up with so much of this kind of aggravation.

And don’t think that just putting a better looking dashboard on top of cruddy unintegrated tools will help the UX situation either. It ends up being a prettier but still unsatisfying experience for the user.

The invisible stuff counts too

Successful APM is not just about the right set of tools or the correct integration. APM needs to work in tandem with a culture that supports it. A performance team with stakeholders from several departments needs to work together to establish agreed-upon performance goals and measurements. The perspective of each functional area needs to be taken into account to obtain actionable results from the activities around performance management. Performance improves when it is built into the application lifecycle.

In addition to a culture of collaboration for performance, there are some corollary activities that are also important to APM.

For example, it is widely agreed that the first part of the APM cycle is to find out whether the app can run in the deployed environment it was designed for. That means you had better have a realistic emulation of the network available for such a test. Your network emulator has to be maintained and updated to reflect this week’s changes to the real network. Yes, there are software agents to do this for you, but they have to be managed and checked.

The way the team is structured to work together also greatly impacts UX. If there aren’t common goals and communication between the groups of stakeholders, things can’t possibly get done efficiently. DevOps embraces such communication, and may well be the structure a technical team can adopt. But whatever the model used, communication among all members of the team is vital to success. That means that the business owner has to be part of the culture and part of the team. Performance must be everyone’s concern, as it affects the enterprise as a whole.

APM is bigger than IT

APM data can be more than just fodder for fixing network-based UX problems. Operational intelligence tools can use the APM feed to guide business processes that affect the entire enterprise. To improve, an enterprise needs to be aware of where those improvements should be directed. Taking a business’s pulse via APM is a way to monitor functions that are critical to getting things done.

So, understanding how important APM can be to an enterprise makes having a good UX not just something optional, but operational. An enterprise that is focused on performance will use APM tools for a variety of purposes. Those tools, working in concert, must be able to undertake analysis that will show what the needed actions should be across the organization. Otherwise, the users will not have a very happy experience.

An APM solution divided cannot stand

I am surely not the first to take notice of this swelling need for tool unification in the APM space. As I mentioned above, the industry at large has grown disenchanted with the complexities and disconnections of solutions in the application performance market today. People are realizing that the problem will continue to get worse until it is solved, and the market won’t stand for it much longer. Let’s not forget, APM users are highly skilled, highly trained experts who make a living preventing end-users from becoming dissatisfied with technology. Does anyone really think these folks will put up with technology that they themselves are dissatisfied with?

One would imagine that the vendors who survive the APM “gold rush” and emerge as market leaders in 2014 and beyond will be the ones already hard at work answering the demand for a unified APM solution.

Article Tags

Related blog posts

Products and Tools

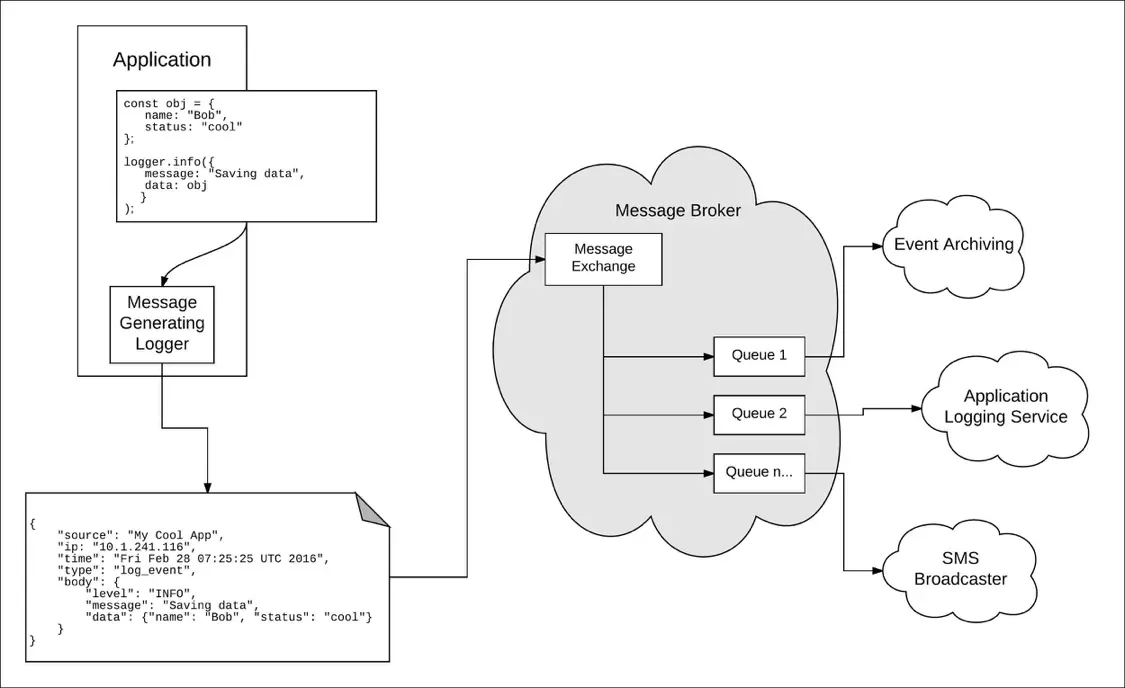

Taking a Message-Based Approach to Logging

Robert Reselman

Detection and Response

6 Best Practices for Effective IT Troubleshooting

Robert Reselman

Security Operations

3 Steps to Building an Effective Log Management Policy

Robert Reselman

Security Operations

3 Core Responsibilities for the Modern IT Operations Manager

Robert Reselman