When we think of traditional development and production operations, we often put everything into a linear software delivery pipeline that starts with a development backlog and ends with production monitoring. We slot tools into each stage and, for the most part, keep everything segmented. Log analysis is a common tool in that chain, but where does it fit? At the end? I think not.

Log analysis can be used throughout your entire software delivery pipeline.

The linear pipeline is a symptom of the old days of waterfall application development. But it is also a symptom of siloed organizations and poor communication in which individuals stick to their function, and do not go outside of it.

However, in DevOps the barriers are broken down for a reason. Not so we can “all just get along”… it is not that touchy-feely. Breaking them down has a tactical benefit: it lets a pipeline that cannot be chunked function smoothly. If open communication does not exist, then it is not DevOps. Plain and simple.

Very often it is IT ops that sets up and uses, at least initially, the log analysis platform. This makes sense because the increased rate of releases makes pulling events manually nearly impossible. But soon after, most development shops realize there is a lot to gain for developers as well, who can use it to access information about bugs, user access, and so on. But this is still a production logging use case.

Did you know it goes further than that? Log analysis should actually be used to log the entire software delivery pipeline. This means agents and API calls connected to all critical application functions, processes, and machines used for dev and continuous integration, and even to orchestration and test script runs.

There is one simple reason this should be the case: full visibility allows the correlation of stages of software deployment. This in turn allows an identification of what is going well, and what is not, in the pipeline itself. It also identifies the bottlenecks that are preventing even more frequent releases.
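To make the bottleneck idea concrete, here is a minimal sketch of turning start and stop events from different pipeline stages into per-stage durations. The event shape (`release`, `stage`, `event`, `ts`) and the function name `stage_durations` are assumptions made up for illustration, not the format of any particular log analysis product.

```python
from collections import defaultdict


def stage_durations(events):
    """Return {release: {stage: seconds}} from paired start/stop events.

    Each event is a dict with hypothetical keys:
    release, stage, event ("start" or "stop"), ts (seconds).
    """
    starts = {}
    durations = defaultdict(dict)
    # Process events in time order so every stop follows its start.
    for e in sorted(events, key=lambda e: e["ts"]):
        key = (e["release"], e["stage"])
        if e["event"] == "start":
            starts[key] = e["ts"]
        elif e["event"] == "stop" and key in starts:
            durations[e["release"]][e["stage"]] = e["ts"] - starts.pop(key)
    return {release: dict(stages) for release, stages in durations.items()}


def slowest_stage(durations, release):
    """Name the stage that took longest for one release."""
    stages = durations[release]
    return max(stages, key=stages.get)
```

Once every stage logs with a shared release identifier, the slowest stage for a given release falls out of a simple lookup, and that is the stage to look at first when you want to release more often.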

Take QA, for example. If a QA team is using automation, they are likely using Selenium. Selenium test runs, if you have ever tried them, are VERY slow. This usually means that the QA team builds test cases and puts them into code, but does not run the tests right away. Instead, they run them right before they leave for the day. Or there is an integration environment where the tests run automatically upon each commit. Either way, the machines that run the tests are taxed, and nothing can move forward until the test runs are done. But what happens if a script fails? This is terrible news because it means a re-run. The cost of this process for deployments might not be clear if it is not observed. And this is where log analysis can play a very important role.

Log your test runs, including, at minimum, the start and stop times, script names, and failures. This allows IT to help optimize the test infrastructure as well. Why? Because the test logs can be correlated with system performance data produced by the agents on the machines where the tests are run. Maybe there is some way to load balance, or to increase performance because disk I/O is a hurdle. Then both QA and Ops are working together to increase the efficiency of the pipeline.
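As a rough sketch of what that minimum could look like, the wrapper below emits one JSON line when a test script starts and another when it stops, including the failure if there was one. The function name `log_test_run`, the field names, and the `emit` hook are all assumptions for illustration; in practice you would point `emit` at whatever ships lines to your log analysis platform.

```python
import json
import logging
import time

logger = logging.getLogger("test_runs")


def log_test_run(script_name, run_fn, emit=None):
    """Run one test script and emit start/stop events as JSON lines.

    run_fn is any callable that raises an exception on failure.
    emit defaults to logger.info; pass another callable to redirect output.
    """
    emit = emit or logger.info
    started = time.time()
    emit(json.dumps({"event": "test_start", "script": script_name, "ts": started}))

    status, error = "passed", None
    try:
        run_fn()
    except Exception as exc:  # record the failure instead of losing it
        status, error = "failed", repr(exc)

    stopped = time.time()
    record = {
        "event": "test_stop",
        "script": script_name,
        "ts": stopped,
        "duration_s": round(stopped - started, 3),
        "status": status,
        "error": error,
    }
    emit(json.dumps(record))
    return record
```

Because each line carries a timestamp and a script name, it can be lined up against the CPU, memory, and disk data that agents on the same machine are already reporting.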

It is not just QA. It could be GitHub (failed commits), backlog systems (ebb and flow of volume), release management tools, CI infrastructure, testing environments, developer machines, etc. And none of these steps needs to live in an abyss. Correlated with production data, their logs provide a complete picture of the entire development environment.

But what is really cool is that a version currently in production can be the source of improvement for a future release in development right now. The big factor here is performance. Did a new release reduce performance? Well then maybe development should look at the new functionality and find out why. Performance impacts users, and future scaling questions.
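Here is one hedged way to ask the "did the new release reduce performance?" question of your production logs. It assumes each log record has already been parsed into a dict with a `release` tag and a `latency_ms` value; those field names and both function names are hypothetical.

```python
from statistics import median


def median_latency_by_release(records):
    """Group parsed log records by release and take the median latency."""
    by_release = {}
    for r in records:
        by_release.setdefault(r["release"], []).append(r["latency_ms"])
    return {release: median(values) for release, values in by_release.items()}


def latency_ratio(records, old_release, new_release):
    """Ratio of new to old median latency; above 1.0 means the new release is slower."""
    medians = median_latency_by_release(records)
    return medians[new_release] / medians[old_release]
```

A ratio well above 1.0 is a cue for development to look at what changed in that release. The median is used here only because it is less sensitive to a few outlier requests than the mean; a percentile would work just as well.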

You have the tool. And now you know that even production benefits from all the data that precedes it. As does the efficiency of your entire development shop.

Logging all stages in the software delivery pipeline converts a linear process into the complete cycle that DevOps demands from us.

And it shares the power and love that Ops has with robust log analysis.

Article Tags

Related blog posts

Products and Tools

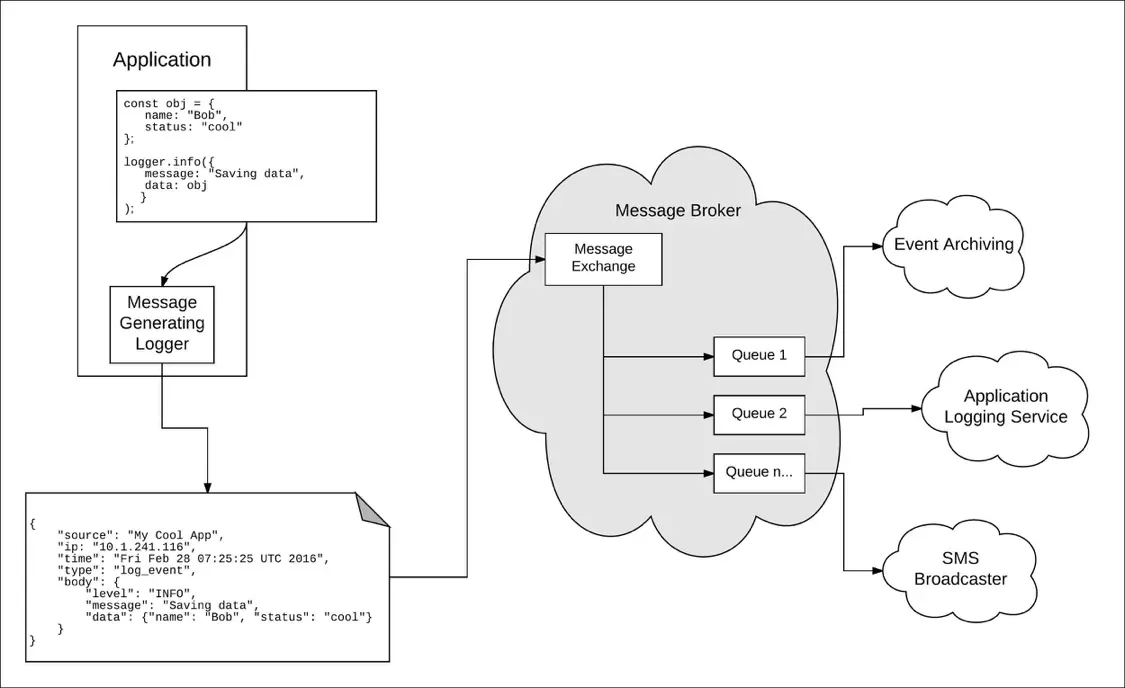

Taking a Message-Based Approach to Logging

Robert Reselman

Detection and Response

6 Best Practices for Effective IT Troubleshooting

Robert Reselman

Security Operations

3 Steps to Building an Effective Log Management Policy

Robert Reselman

Security Operations

3 Core Responsibilities for the Modern IT Operations Manager

Robert Reselman