Here at Logentries we are constantly adding to the options for analysing log generated data. The query language ‘LEQL’ has a number of statistical functions and a recent addition has been the new Standard Deviation calculation.

LEQL query example

where(image=debian) groupby(location) calculate(standarddeviation:usage)

An interesting point that relates to this new function is that at the heart of its implementation is the calculation of ‘variance’ of the data using a specific type of algorithm – namely an ‘online’ algorithm. Once the ‘variance’ is found then the Standard Deviation is the square root of this ‘variance’ value. The challenge is finding the ‘variance’ for a sequence of data points, where it is not known before hand how many data points there will be.

Online Algorithm

An ‘online’ algorithm is one that is designed to handle processing input data that arrives in a sequence, and not as a complete set. Taking log events as a perfect example, this type of data arrives sequentially one event after the other. An ‘online’ algorithm is designed to process each new piece of data or log event as it arrives to produce a final result. Also note that such algorithms are not designed with any assumptions about future data that may arrive, such as how many events or when. These are the unknowns the algorithm designer must take into account.

The opposite would be an ‘offline’ algorithm where the complete data set of interest is provided to the algorithm at the same time. Therefore the ‘offline’ algorithm will start out and finish with a known fixed number of data points.

On trying to establish the performance of either type, it is easier for ‘off-line’ algorithms as “for each sequence of requests, such an algorithm selects that sequence of actions which minimise the cost”. The difficulty with ‘on-line’ algorithms is that ‘whatever actions an on-line algorithm takes in response to an initial sequence of requests, there will be a sequence of further requests that makes the algorithm look foolish”. For further study on this, refer to [Karp 1992].

As also explained in [Karp 1992] one measurement of the cost incurred by an ‘on-line’ algorithm is it’s ‘competitive ratio’, being the “maximum, over all possible input sequences, of the ratio between the cost incurred by the on-line algorithm and the cost incurred by an optimal off-line algorithm. An optimal on-line algorithm is then one whose competitive ratio is least”. Of course finding this ratio is easier said then done, and also note that not all ‘online’ algorithms have matching ‘offline’ equivalents.

B. P. Welford

The study of such problems that gave rise to such algorithms started long before the Internet came into being. It is fascinating to note the dates of when such work was done to generate the algorithms that are so critical to computing, telecommunications and the Internet today. With regard to the ‘online’ variance algorithm, this arose from the work of Scientist B. P. Welford who published a paper in 1962 on “a Method for Calculating Corrected Sums of Squares and Products” with an offered solution where values are used only once and need not be stored.

Standard Deviation

The Standard Deviation is a measurement of the dispersion of a set of data points. A low value indicates that the set of data points tend to be close to the mean (the expected value). In simple terms the Standard Deviation of a set of data points is the square root of variance. Which then raises the question of how to find the variance on a sequence of data where the number of data points and their values is unknown. This is where the ‘online’ variance work done by B. P. Welford is relevant. There are two ways of finding the variance depending on if calculating an estimate of the Standard Deviation for a given population from a ‘sample’ data set or calculating using the complete ‘population’ data set. The code example below shows methods for both ways.

The following code example is that of an implementation in Java of a class called ‘StandardDeviation’ in which an ‘online’ algorithm is used as the basis of finding the Standard Deviation for a sequence of individual data points. It is based on an implementation example done by Sedgewick and Wayne. There is also a JUnit Test Class with two test methods – each of which use the same sequence of numbers as data – but where one version tests the result for the ‘sample’ standard deviation, and the other the ‘population’ standard deviation.

Java Class with Standard Deviation (on-line algorithm implementation)

public class StandardDeviation {

private static int count = 0;

private static double mean = 0.0;

private static double sum = 0.0;

public static void setValues(double data){

++count;

double delta = mean + (data - mean) / count;

sum += (data - mean) * (data - delta);

mean = delta;

}

private static Double getVariancePopulation(){

// for 'population' standard deviation calculation

return sum / (count);

}

private static Double getVarianceSample(){

// for 'sample' standard deviation calculation

return sum / (count - 1);

}

public static Double getSDPopulation(){

return count > 0 ? Math.sqrt(getVariancePopulation()) : 0.0;

}

public static Double getSDSample(){

return count > 0 ? Math.sqrt(getVarianceSample()) : 0.0;

}

}In the class above the setValues method will accumulate the data as it is fed each value from a sequence, one at a time. The simple, yet significant difference in calculation between ‘sample’ or ‘population’ can be seen in the two methods getVarianceSample() and getVariancePopulation(). Once the variance is found then the Standard Deviation is the square root of this variance.

JUnit Class with Standard Deviation Test Methods

import org.junit.Test;

import java.util.Arrays;

import java.util.Iterator;

import java.util.List;

import static org.junit.Assert.assertEquals;

public class TestStandardDeviation {

private static final double DELTA = 1e-15;

@Test

public void testSDSample(){

// [1]

StandardDeviation sd = new StandardDeviation();

// [2]

List data = Arrays.asList(50.0, 45.0, 55.0, 58.0, 43.0, 49.0, 50.0);

// [3]

Iterator it = data.iterator();

while(it.hasNext()){

sd.setValues(it.next());

}

// [4]

assertEquals(5.228129047119374, sd.getSDSample(), DELTA);

}

@Test

public void testSDPopulation(){

// [1]

StandardDeviation sd = new StandardDeviation();

// [2]

List data = Arrays.asList(50.0, 45.0, 55.0, 58.0, 43.0, 49.0, 50.0);

// [3]

Iterator it = data.iterator();

while(it.hasNext()){

sd.setValues(it.next());

}

// [4]

assertEquals(4.840306956027833, sd.getSDPopulation(), DELTA);

}

}In the JUnit test class above are 2 almost identical test methods that exercise the Standard Deviation class. Steps [1] to [3] in both methods are identical – [1] there is a new object created, [2] a list of numbers is created, with identical values for both test methods and [3] the list (sequence) of numbers is feed one number at a time to the 'sd.setValues()' method. The difference between the methods is seen in the [4] assertEquals – in one method we get the Standard Deviation using the ‘sample’ variance calculation while in the other method we use the ‘population’ calculation. As can be seen the resulting values are very different.

References

Note that Logentries does not take any responsibility for the content of external websites.

- Video: Logentries Query Language (LEQL)

- Wikipedia: Standard Deviation

- Wikipedia: Variance

- Wikipedia: Online Algorithm

- JUnit

- College site: Algorithms, 4th Ed. by Sedgewick and Wayne

- Citation: Welford, B.P., 1962. Note on a method for calculating corrected sums of squares and products. Technometrics, 4(3), pp.419-420.

- Citation: Karp, R.M., 1992, August. On-line algorithms versus off-line algorithms: How much is it worth to know the future?.

In IFIP Congress (1) (Vol. 12, pp. 416-429).

Use the LEQL analytic function Standard Deviation to examine the distribution of data points in your machine data. Try Logentries today with a free 30 day trial, get started.

Article Tags

Related blog posts

Products and Tools

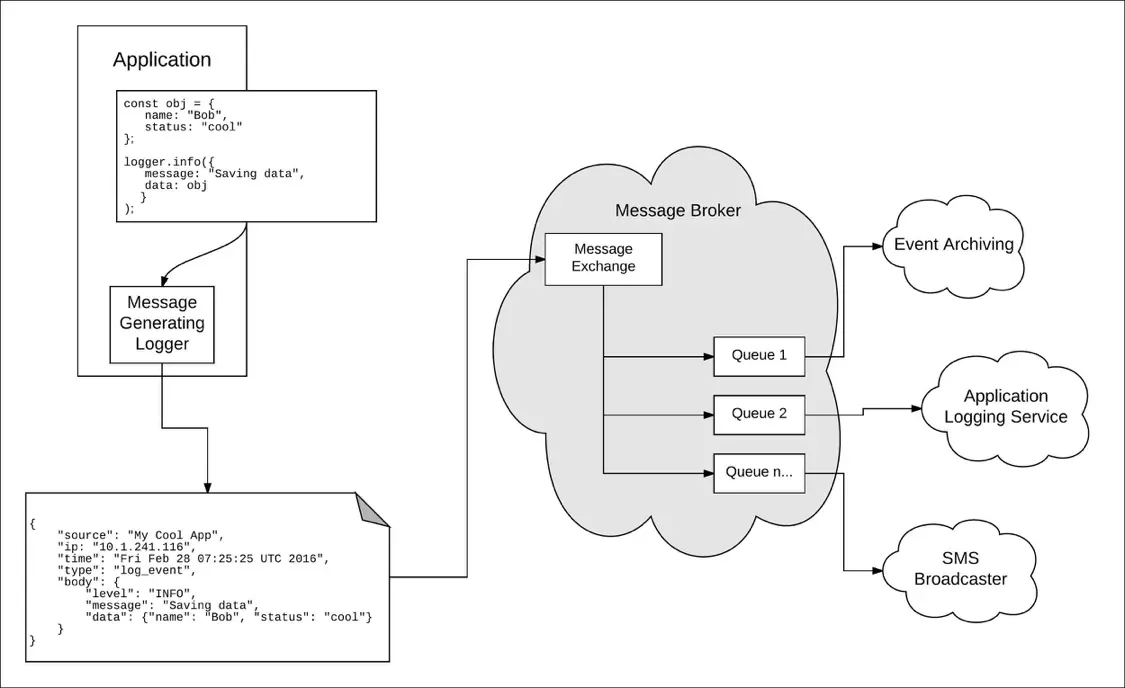

Taking a Message-Based Approach to Logging

Robert Reselman

Detection and Response

6 Best Practices for Effective IT Troubleshooting

Robert Reselman

Security Operations

3 Steps to Building an Effective Log Management Policy

Robert Reselman

Security Operations

3 Core Responsibilities for the Modern IT Operations Manager

Robert Reselman