Introduction

The IT and DevOps world has come a long way in how it manages infrastructure.

Virtualization revolutionized our ability to quickly deploy applications and scale up services when needed, paying only for the computing power used. Over the last few years, however, agile methodologies and continuous delivery have pushed VMs to their limits. Many teams still reuse a single VM for releases and testing, and production VMs rarely change unless something goes seriously wrong. At today's pace of software development, VMs can still be hard to obtain, provision, and share, and thus become a bottleneck.

In the modern software delivery chain, however, infrastructure needs to be as easy to create and share as a digital document.

This is where container technology comes in and takes the next step. With just a few commands, you can create an image, provision it, and run it as a container. Containers are more compact than VMs and can run anywhere without being tied to a particular hypervisor or cloud. They can even run directly on bare metal, offering greater efficiency than VMs.

Now that container technology is here to stay, how can you leverage its benefits and prepare your environment to support it?

Advantages of Containers

Leveraging containers moves infrastructure upstream into the hands of developers. This shift enables IT to focus on other responsibilities, like ongoing performance monitoring, while developers can focus on getting their job done without barriers. In the modern delivery chain, this means:

Limitless sandboxes:

Using their local machines or integration environments, developers can have limitless instances of the entire stack for testing against their branch of code. If something breaks, the sandbox can be thrown away and spun up again. With containers, developers can catch integration issues before integration even happens.

Optimized Releases:

Continuous Integration (CI) and Continuous Delivery (CD) can both be driven by containers. Containers enable automation that makes releases independent of any particular datacenter, cloud, or host. Because environments are cheap to create, teams can afford more integration, unit, and functional testing and longer testing periods, resulting in better application quality. Pipelines can be driven entirely by the movement of containers, rather than by pushing code onto existing infrastructure.

Build Consistency:

It’s typically easy to identify containers, how they are configured, and even their current network and memory states. This reduces the risk of inconsistencies across the environment, such as improper configurations, patches, application versions, etc. And if something does go wrong? Fixing it is as easy as replacing one container with another.

Better DevOps:

Making it easier to develop, test, and release ultimately makes it easier to slipstream DevOps best practices into an organization. Containers simplify the release process and the adoption of continuous integration, delivery, and deployment practices. This can help even enterprises with existing business applications transform the way they build integrations, test new versions, and develop future mobile and web applications.

A New Set of Anticipated Challenges

Containers enable flexibility, but what does flexibility mean in practice? In modern development, it’s easy to let simplicity lead to sloppiness. While we focus on speedy releases, we can’t forget to build in a stable and extensible way. Thus, with the speed of containers comes a new set of challenges:

Uncontrolled Versioning

Most containers are just lightweight Linux environments. Tools like Docker make it easier than ever for developers to pull a container image from Docker Hub and launch it right from their terminal. But the same convenience of starting and stopping containers can also lead to chaos. What if another developer updates the container image, causing new containers to behave differently from older ones? What if a developer restarts an old container with an outdated configuration?

While Docker provides command line tools for reviewing a list of available images with tags, image ids and created dates, this information is typically only available when proactively requested per host.
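To illustrate why per-host listings are hard to reason about, here is a minimal Python sketch that merges image listings gathered from several hosts and flags tags that resolve to different image IDs on different hosts. It assumes each host's listing was captured with `docker images --format "{{.Repository}}:{{.Tag}}\t{{.ID}}"`; the host names, image IDs, and the `HOST_LISTINGS`, `parse_listing`, and `find_tag_drift` names are hypothetical.

```python
from collections import defaultdict

# Hypothetical per-host output of:
#   docker images --format "{{.Repository}}:{{.Tag}}\t{{.ID}}"
# gathered from each host (for example over SSH or by a logging agent).
HOST_LISTINGS = {
    "build-01": "api:latest\t3f2a9c1b7d4e\nworker:1.4\t9b8c7d6e5f4a\n",
    "build-02": "api:latest\t7e6d5c4b3a21\nworker:1.4\t9b8c7d6e5f4a\n",
}


def parse_listing(text):
    """Parse 'repo:tag<TAB>image_id' lines into (ref, image_id) pairs."""
    pairs = []
    for line in text.splitlines():
        if not line.strip():
            continue  # skip blank lines
        ref, image_id = line.split("\t", 1)
        pairs.append((ref, image_id.strip()))
    return pairs


def find_tag_drift(listings):
    """Return {ref: {image_id: [hosts]}} for refs whose image ID differs between hosts."""
    seen = defaultdict(lambda: defaultdict(list))
    for host, text in listings.items():
        for ref, image_id in parse_listing(text):
            seen[ref][image_id].append(host)
    # Keep only refs that map to more than one distinct image ID.
    return {ref: dict(ids) for ref, ids in seen.items() if len(ids) > 1}


if __name__ == "__main__":
    for ref, ids in find_tag_drift(HOST_LISTINGS).items():
        print(f"{ref} differs across hosts: {ids}")
```

Here `api:latest` points at two different images on the two hosts, which is exactly the kind of drift that is invisible when each host is inspected on its own.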

Security Risks

By design, a microservice architecture, which breaks a monolithic application into many smaller pieces, exposes more endpoints to potential attacks. Additionally, a central repository like Docker Hub can introduce a slew of security issues as developers pull publicly available images and launch them as containers. A survey from the firm Banyan found that over 74% of Docker images created in 2015 included high- or medium-priority security vulnerabilities. And while container images can be patched, a container keeps running the image it was created from, so old containers that have been paused or stopped remain a threat to your system if they are ever accidentally restarted.

Container Sprawl

Yet another challenge that comes with the convenience of containers is forgotten containers that keep consuming resources. As containers are launched, stopped, and launched again, it is easy to overlook the growing number of unused containers sitting on a host. When organizations evaluate the growth rate of their instances and plan for capacity, unknowingly counting these rogue containers can lead to wasted money and scaling faster than necessary.

Obviously, developing at a slower pace and establishing rules around who makes changes, which images are used, and when containers are removed can alleviate some of these challenges, but at what cost? Enough restrictions can eventually slow the release cycle and delay key business objectives.

Distributed Data

Finally, how should teams approach monitoring an application that has been split into many small containers? Traditional monitoring or application performance tools can be applied to the application inside an individual container, but they lack a holistic view of the entire application. And when containers are frequently launched and stopped, how should CPU and memory be monitored?
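One way to regain a holistic view is to roll per-container samples up to the service level, so that containers which come and go still count toward their service's totals. The Python sketch below assumes CPU and memory samples have already been collected (for example from `docker stats` output) and tagged with a service name; `StatSample`, `summarize_by_service`, and the sample values are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class StatSample:
    container_id: str
    service: str        # hypothetical service label attached at collection time
    cpu_percent: float
    mem_bytes: int


def summarize_by_service(samples):
    """Roll short-lived per-container samples up into one summary per service."""
    totals = defaultdict(lambda: {"containers": set(), "cpu_sum": 0.0, "mem_peak": 0, "n": 0})
    for s in samples:
        t = totals[s.service]
        t["containers"].add(s.container_id)         # distinct containers seen
        t["cpu_sum"] += s.cpu_percent               # summed for a per-sample average
        t["mem_peak"] = max(t["mem_peak"], s.mem_bytes)
        t["n"] += 1
    return {
        service: {
            "containers_seen": len(t["containers"]),
            "avg_cpu_percent": t["cpu_sum"] / t["n"],
            "peak_mem_bytes": t["mem_peak"],
        }
        for service, t in totals.items()
    }


if __name__ == "__main__":
    samples = [
        StatSample("a1", "api", 20.0, 100_000_000),
        StatSample("a2", "api", 40.0, 150_000_000),
        StatSample("w1", "worker", 10.0, 50_000_000),
    ]
    print(summarize_by_service(samples))
```

Averaging per sample and keeping the peak per service is just one choice; the point is that the unit of analysis becomes the service, not any single container.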

A successful rollout of any new technology starts with identifying what will be measured and how. By integrating monitoring and analysis tools into each stage of your container workflow, from development to deployment, you can establish a holistic view of activity and performance that reduces costs, helps prevent security threats, simplifies operations, and alerts you to issues as they occur.

Implementing an End-to-End Logging Strategy

While it’s possible to use a third-party logging service to install logging agents within containers, there are lighter-weight alternatives that can provide more comprehensive end-to-end log management and analytics. By introducing a dedicated logging container into your environment, you can collect logs in a scalable way across your entire infrastructure without the additional overhead of multiple agents. Using a dedicated logging container, many of the challenges presented by containers can be strategically overcome.

Version control

By using a log management and analytics service, keeping image and container versions under control becomes much easier. By monitoring your team's central image library (such as a locally hosted Docker Registry) with a data visualization tool, teams can build shareable dashboards that display the latest image versions or tags, keeping the entire team up to date.

Using real-time alerts can also help with version control. Alerts can notify team members when new images are pushed to the Registry or when a container with an old tag is restarted.
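A minimal Python sketch of these two alert rules follows. The event dictionaries are simplified and only loosely modeled on Docker Registry push notifications (`action`, `target.repository`, `target.tag`) and Docker engine container events (`Type`, `Action`, `Actor.Attributes.image`); treat the exact shapes, and the hypothetical `check_event` helper, as assumptions rather than a specific product's API.

```python
def check_event(event, latest_tags):
    """Return an alert message for an event, or None.

    latest_tags maps repository -> most recently pushed tag; it is updated in place.
    """
    # Registry-style push notification: remember the most recently pushed tag.
    if event.get("action") == "push":
        target = event.get("target", {})
        repo, tag = target.get("repository"), target.get("tag")
        if repo and tag:
            latest_tags[repo] = tag
            return f"New image pushed: {repo}:{tag}"
        return None  # e.g. blob pushes carry no tag
    # Engine-style container event: warn when a container starts from an older tag.
    if event.get("Type") == "container" and event.get("Action") in ("start", "restart"):
        image = event.get("Actor", {}).get("Attributes", {}).get("image", "")
        repo, sep, tag = image.rpartition(":")
        # Skip refs like "localhost:5000/api", where the last ":" belongs to a port.
        if sep and "/" not in tag and repo in latest_tags and tag != latest_tags[repo]:
            return f"Container started from old tag {image} (latest is {latest_tags[repo]})"
    return None


if __name__ == "__main__":
    latest = {}
    print(check_event({"action": "push", "target": {"repository": "api", "tag": "1.5"}}, latest))
    print(check_event({"Type": "container", "Action": "restart",
                       "Actor": {"Attributes": {"image": "api:1.3"}}}, latest))
```

"Most recently pushed" is used as a stand-in for "latest version"; teams that push hotfix tags out of order would need a real version comparison instead.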

Security monitoring

Security risks associated with containers become considerably more manageable with a log management and analytics service. For example, when logging from the Docker Registry, authorization requests and tokens can be monitored and teams alerted to authentication failures. Endpoint requests that don't match an expected syntax can trigger alerts, notifying teams of potential injection attacks.
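As an illustration, the Python sketch below scans registry access-log lines for repeated authorization failures (HTTP 401) and for request paths that fall outside the expected `/v2/` API syntax. The log format, the allowed-character pattern, and the threshold of three failures are hypothetical choices for the example; a real registry's log format and a production rule set will differ.

```python
import re
from collections import Counter

# Hypothetical, simplified access-log format: "<client_ip> <method> <path> <status>"
LINE_RE = re.compile(r"^(?P<ip>\S+) (?P<method>[A-Z]+) (?P<path>\S+) (?P<status>\d{3})$")
# Registry API v2 paths start with /v2/; allow only a conservative character set.
ALLOWED_PATH_RE = re.compile(r"^/v2/[A-Za-z0-9._/:-]*$")
AUTH_FAILURE_THRESHOLD = 3  # hypothetical: alert once a client reaches this many 401s


def scan(lines):
    """Return alert messages for suspicious paths and repeated auth failures."""
    alerts = []
    failures = Counter()
    for line in lines:
        m = LINE_RE.match(line.strip())
        if not m:
            alerts.append(f"Unparseable line: {line.strip()!r}")
            continue
        ip, path, status = m["ip"], m["path"], m["status"]
        # Anything outside the expected syntax may be an injection or traversal attempt.
        if not ALLOWED_PATH_RE.match(path) or ".." in path:
            alerts.append(f"Suspicious path from {ip}: {path}")
        if status == "401":
            failures[ip] += 1
            if failures[ip] == AUTH_FAILURE_THRESHOLD:  # alert once per client
                alerts.append(f"Repeated auth failures from {ip}")
    return alerts
```

In a log management service the same two rules would typically be saved searches with alerts attached, rather than a script.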

Logging from your application is equally important when monitoring security. For example, when you run Logentries' Docker container on your host, application logs from every container on that host are automatically sent to the Logentries dashboard in real time, while features like Anomaly Detection continuously scan your log data for unexpected activity.

Resource management

By analyzing container logs, you can easily identify unused containers for removal from your host. Simple queries that group log events by container ID and surface the containers with the fewest requests or the lowest memory usage over the last 30 days can show which containers are likely candidates for removal.
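A query of that kind can be sketched in Python as follows. It assumes request events have already been parsed from container logs into `(container_id, timestamp)` pairs and that the full list of container IDs on the host is known (for example from `docker ps -a`); the `idle_containers` function and its parameters are hypothetical.

```python
from collections import Counter
from datetime import datetime, timedelta, timezone


def idle_containers(events, known_ids, now, window_days=30, max_requests=0):
    """Return sorted container IDs with at most max_requests requests in the window.

    events: iterable of (container_id, timestamp) pairs, one per logged request.
    known_ids: every container ID on the host, so containers that logged
               nothing at all are still reported.
    """
    cutoff = now - timedelta(days=window_days)
    # Count only requests inside the window; a Counter returns 0 for unseen IDs.
    counts = Counter(cid for cid, ts in events if ts >= cutoff)
    return sorted(cid for cid in known_ids if counts[cid] <= max_requests)


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    events = [("a", now - timedelta(days=1)), ("b", now - timedelta(days=45))]
    print(idle_containers(events, {"a", "b", "c"}, now))
```

This sketch counts requests only; memory usage would need a separate data source such as container stats. Treat the result as a list of candidates and confirm each one (for example with `docker ps -a --filter status=exited`) before removing anything.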

Application & System Performance monitoring

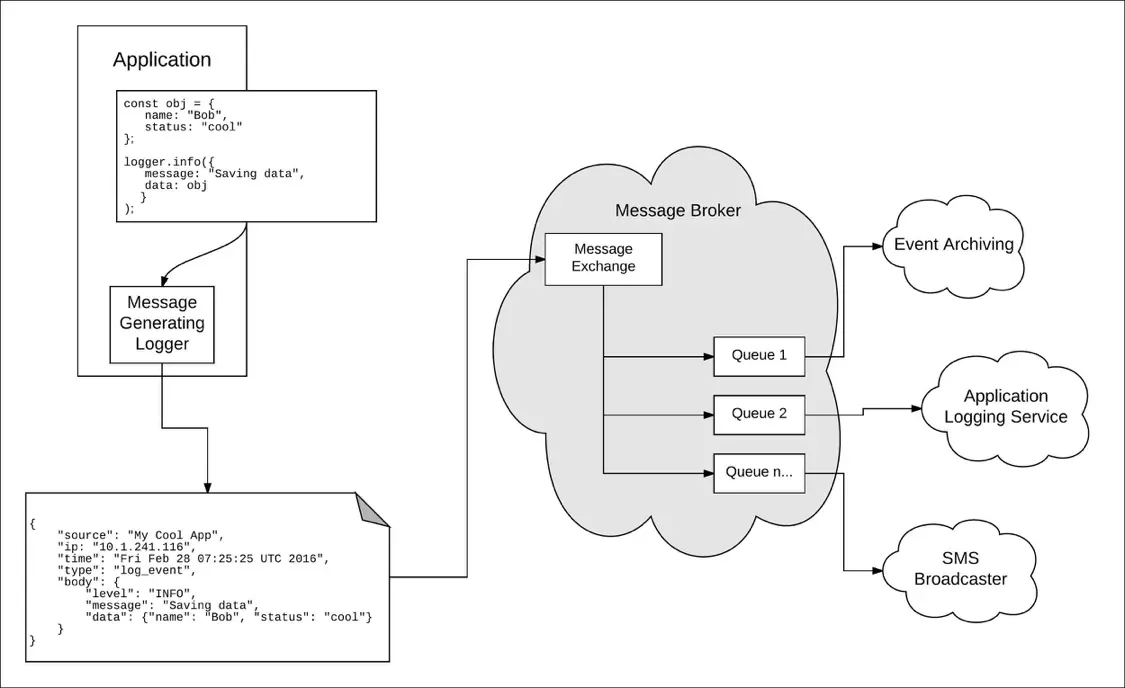

There are a variety of ways to collect application log data from containers, including:

- Sending logs to syslog

- Sending logs to standard out

- Pushing logs to and collecting them from the host

- Shipping logs using a log collector like Journald

- Running a custom logging container, dedicated to collecting and streaming log data

While best practices depend on your infrastructure and application, running a custom logging container cleanly delegates the collection of application logs and container stats to a dedicated container. The logging container can then stream your aggregated log data to a central dashboard for further analysis, visualization, and alerting.
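Whichever collection method you choose, it helps when applications emit structured, line-oriented output. The Python sketch below writes one JSON object per line to standard out, which Docker captures as the container's log stream and which a logging container can then forward; the `JsonFormatter` class, the `make_logger` helper, and the field names are illustrative choices, not a format required by any particular service.

```python
import json
import logging
import sys


class JsonFormatter(logging.Formatter):
    """Format each log record as a single-line JSON object."""

    def format(self, record):
        payload = {
            "time": self.formatTime(record, "%Y-%m-%dT%H:%M:%S"),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),  # applies %-style arguments
        }
        return json.dumps(payload)


def make_logger(name="app", stream=None):
    """Return a logger that writes JSON lines to stream (stdout by default)."""
    handler = logging.StreamHandler(stream or sys.stdout)
    handler.setFormatter(JsonFormatter())
    logger = logging.getLogger(name)
    logger.handlers[:] = [handler]  # avoid duplicate handlers on repeated calls
    logger.setLevel(logging.INFO)
    logger.propagate = False        # keep records out of the root logger
    return logger


if __name__ == "__main__":
    make_logger().info("order %s processed", 42)
```

One JSON object per line keeps each event self-describing, so downstream tools can filter on fields such as `level` without fragile text parsing.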

How to Log from Docker Containers


Using Logentries’ Docker container, logging from your microservice architecture takes just a few steps and one command.

- Create a Logentries account and select “Docker” from the “Add a Log” screen.

- Clone the docker-logentries repository.

```shell
git clone https://github.com/logentries/docker-logentries.git
```

- Change into the docker-logentries directory.

```shell
cd docker-logentries
```

- Build the Docker image. This also installs npm.

```shell
# Tag the image with the same name the run step uses
docker build -t logentries/docker-logentries .
```

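- Open a terminal window and run the container, mounting the Docker socket so it can read logs and stats from every container on the host:

```shell
docker run -v /var/run/docker.sock:/var/run/docker.sock logentries/docker-logentries -t <TOKEN> -j
```

Replace `<TOKEN>` with the token of the log you created in your Logentries account.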

Within seconds, you’ll see application log events and container stats streaming into your account.

Conclusions

Containers are already changing the way teams develop applications and are poised to revolutionize the way organizations assemble and deploy applications and services at scale. With the dramatic convenience and flexibility offered by services like Docker comes the introduction of a new set of development and operational challenges. The key to addressing these challenges head-on is implementing an end-to-end logging strategy that covers your entire infrastructure. Through the use of real-time log management and analytics services, teams can troubleshoot, analyze, visualize and be alerted to issues as they occur, providing the tools needed for successfully managing microservice infrastructures at scale.

About Logentries

The Logentries elastic logging service offers real-time log management and analytics at any scale, across any environment, making business insights from machine-generated log data easily accessible to development, IT, and business operations teams of all sizes. With the broadest platform support and an open API, Logentries brings the value of log-level data to any system, to any team member, and to a community of more than 35,000 users worldwide. While traditional log management and analytics solutions require advanced technical skills and are costly to set up, Logentries provides an alternative designed for managing huge amounts of data, visualizing insights that matter, and automating in-depth analytics and reporting across its global user community. To sign up for the free Logentries service, visit logentries.com.
