It’s been a little over a year since ChatGPT was released, and oh how much has changed. Advancements in Artificial Intelligence and Machine Learning have marked a transformative era, influencing virtually every facet of our lives. These technologies have reshaped the landscape of natural language processing, enabling machines not only to understand but also to generate human-like text with unprecedented fluency and coherence. As society embraces these advancements, the implications of Generative AI and LLMs extend across diverse sectors, from communication and content creation to education and beyond.

With AI service revenue increasing more than sixfold within five years, it’s no surprise that cloud providers are investing heavily in expanding their capabilities in this area. Using AWS’ newly released Bedrock, Azure OpenAI Service, and GCP Vertex AI, users can now customize existing foundation models with their own training data for improved performance and customer experience.

Ungoverned Adoption of AI/ML Creates Security Risks

With the market projected to be worth over $1.8 trillion by 2030, AI/ML continues to play a crucial role in threat detection and analysis, anomaly and intrusion detection, behavioral analytics, and incident response. It’s estimated that half of organizations are already leveraging this technology, yet only 10% have a formal policy in place regulating its use.

Ungoverned adoption therefore poses significant security risks. The lack of oversight that comes with Shadow AI can lead to privacy breaches, non-compliance with regulations, and biased model outcomes that produce unfair or discriminatory results. Inadequate testing may expose AI models to adversarial attacks, and the absence of proper monitoring can result in model drift, degrading performance over time. Security incidents stemming from ungoverned AI adoption are increasingly prevalent, and they can damage an organization's reputation and erode customer trust.

Safely Developing AI/ML In the Cloud Requires Visibility and Effective Guardrails

To address these concerns, organizations should establish robust governance frameworks that encompass data protection, bias mitigation, security assessments, and ongoing compliance monitoring to ensure responsible and secure AI/ML implementation. Knowing what’s present in your environment is step one, and we all know how hard that can be.

InsightCloudSec has introduced a specialized inventory page designed exclusively for managing your AI/ML assets. Covering a diverse array of services, from content moderation and translation to model customization, our platform now includes support for Generative AI across AWS, GCP, and Azure.

Once you’ve got visibility into the AI/ML projects running in your cloud environment, the next step is to set up mechanisms that continuously enforce guardrails and policies, so that development happens in a secure manner.
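To make the idea of a guardrail concrete, here is a minimal sketch in Python of what "policy as code" over an asset inventory could look like. It is not the InsightCloudSec API: the `AIAsset` record, its field names, and the two example guardrails are all hypothetical, chosen only to illustrate evaluating every asset against a small set of rules and reporting the violations.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class AIAsset:
    """Hypothetical inventory record; field names are illustrative only."""
    name: str
    provider: str              # e.g. "aws", "azure", "gcp"
    encrypted_at_rest: bool
    publicly_accessible: bool


# Each guardrail maps a human-readable label to a predicate that
# returns True when the asset complies. Both rules are assumptions
# made for this example, not controls taken from any specific pack.
GUARDRAILS: dict[str, Callable[[AIAsset], bool]] = {
    "encryption at rest enabled": lambda a: a.encrypted_at_rest,
    "no public network access": lambda a: not a.publicly_accessible,
}


def evaluate(assets: list[AIAsset]) -> list[tuple[str, str]]:
    """Return (asset name, failed guardrail) pairs for every violation."""
    return [
        (asset.name, label)
        for asset in assets
        for label, check in GUARDRAILS.items()
        if not check(asset)
    ]


if __name__ == "__main__":
    inventory = [
        AIAsset("notebook-a", "aws", encrypted_at_rest=True, publicly_accessible=False),
        AIAsset("workspace-b", "azure", encrypted_at_rest=False, publicly_accessible=True),
    ]
    for name, failure in evaluate(inventory):
        print(f"{name}: {failure}")
```

Running a check like this on a schedule, and alerting whenever the list of violations is non-empty, is one simple way to think about continuous enforcement. A real deployment would pull the inventory from the cloud providers rather than hard-coding it.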

Introducing Rapid7’s AI/ML Security Best Practices Compliance Pack

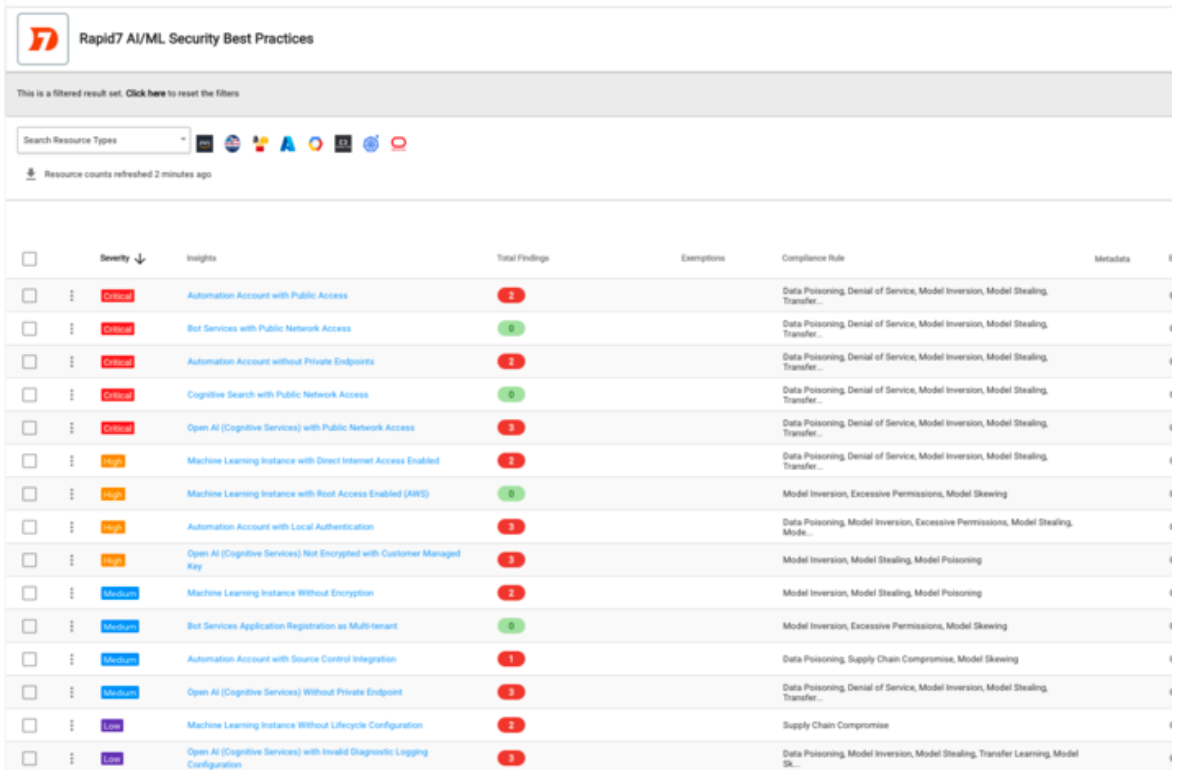

We’re excited to unveil our newest compliance pack within InsightCloudSec: Rapid7 AI/ML Security Best Practices. The new pack is derived from the OWASP Top 10 Vulnerabilities for Machine Learning, the OWASP Top 10 for LLMs, and additional CSP-specific recommendations. With this pack, you can check alignment with each of these controls in one place, enabling a holistic view of your compliance landscape and facilitating better strategic planning and decision-making. Automated alerting and remediation can also be set up as drift detection and prevention mechanisms.

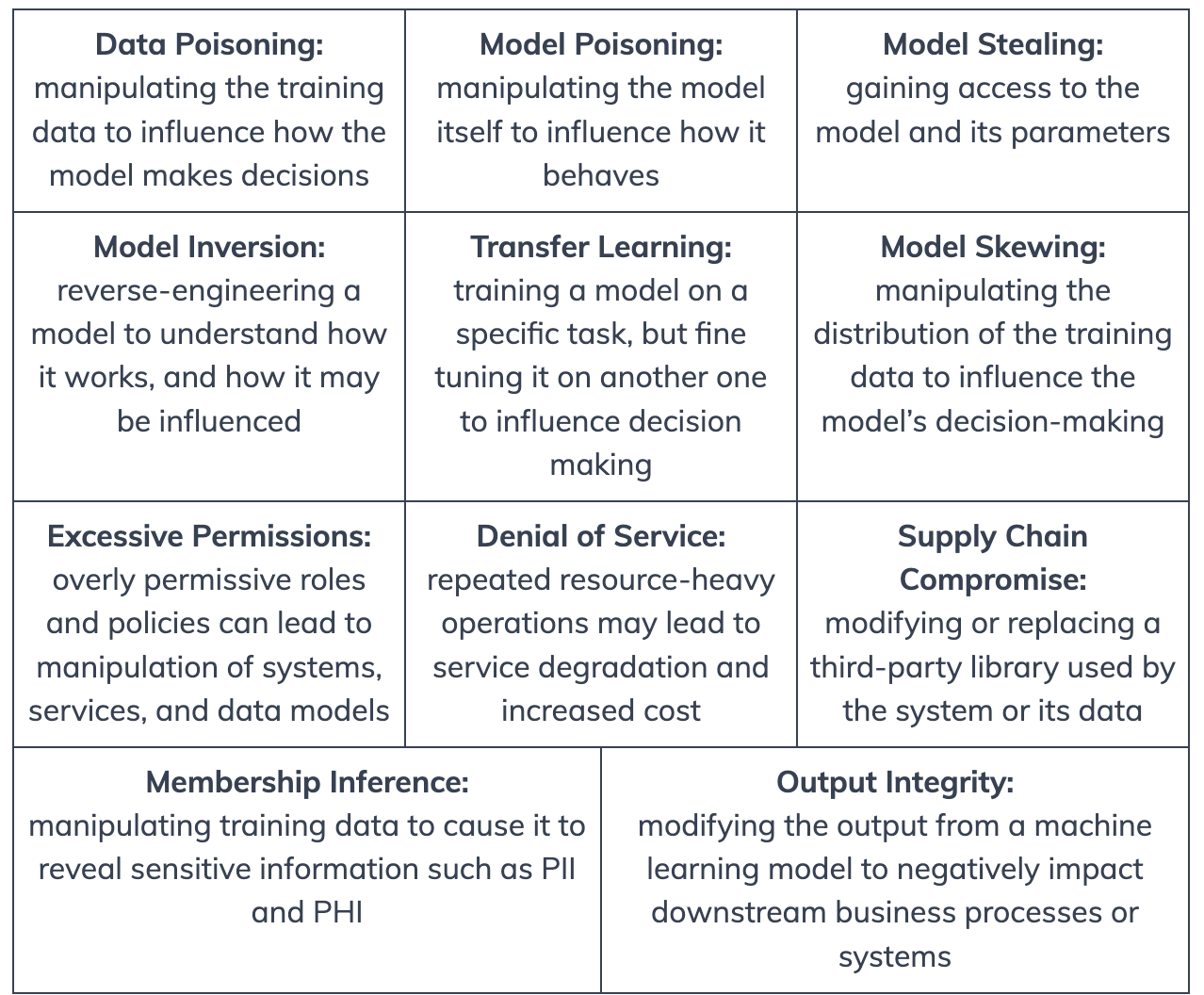

This pack introduces 11 controls, centered around data and model security.

The Rapid7 AI/ML Security Best Practices compliance pack currently includes 15 checks across six different AI/ML services and three platforms, with additional coverage for Amazon Bedrock coming in our first January release.

For more information on our other compliance packs, and leveraging automation to enforce these controls, check out our docs page.
