This week, an anonymous researcher published the results of an "Internet Census" - an internet-wide scan conducted using 420,000 insecure devices connected to the public internet and yielding data on used IP space, ports, device types, services and more. After scanning parts of the internet, the researcher found thousands of devices whose services were protected only by weak or default passwords, and turned those devices into scanning nodes for his project. He logged into the devices remotely, uploaded code that assisted in the scans and included an additional control mechanism, and executed it.

The Internet Census 2012 - as the author calls it - was published together with all its data at Internet Census 2012.

The Approach

It is interesting research, not only because of the data and findings - but also due to the techniques used to accomplish it. This is one of the few situations where a botnet is formed with good intentions and precautions to not interfere with any normal operation of the devices being used. The research both raises awareness about the vast availability of insecure devices on the public internet and at the same time provides the data for researchers for free. Despite these good intentions, this approach is still illegal in most countries and certainly unethical as far as the security community is concerned.

Using insecure configurations and default passwords to get access to remote devices without permission of their owners is illegal and unethical. Going further, pushing and running code on these devices is even worse. The "whitehat nature" of the effort does not justify the means.

As far as my personal opinion goes, I respect the technical aspects of the project and think it serves a good purpose for security awareness and as a data set for further research. On the other hand, I would never have done it in this way myself because of the illegality and ethical concerns around it, and I hope that it does not lead to too many malicious and borderline activities in the future.

Other Similar Efforts

There have been, and still are, ongoing efforts that do internet-wide surveys through legal means. These are often somewhat smaller in scale because of the limited resources available and the cost of thorough scans. An example of this is the Critical Research: Internet Security Survey by HD Moore, which takes a different approach. "Critical.io," as it's known, covers a much smaller number of ports (18 vs 700), but has been continuously scanning the internet for months, gathering trend data in addition to a per-service snapshot. In the past six months, this project has revealed a number of security issues, including over 50 million network-enabled devices at risk through the UPnP protocol and thousands of organizations at risk through misconfiguration of cloud storage, and it has helped identify surveillance malware being used by governments around the world.

There have been other efforts that actually gave the name to the debatable "Internet Census 2012" project:

This project was limited in its depth, as it only focused on machines that reply to ICMP ping requests. Critical IO, Shodan, and the 2012 Census take this to another level.

The Data

As an example of how the data looks, the following output is a part of the file serviceprobes/80-TCP_GetRequest/80-TCP_GetRequest-1 in the dataset:

1.9.6.70	1355598900	5

1.9.6.75	1355618700	5

1.9.6.83	1343240100	3

1.9.6.98	1343231100	1	HTTP/1.1=20200=20OK=0D=0ADate:=20Thu,=2026=20Jul=202012=2007:05:09=20GMT=0D=0ALast-Modified:=20Tue,=2007=20Nov=202006=2003:41:23=20GMT=0D= [...]

The serviceprobes files always consist of four columns: IP address, timestamp, status code and response data (if any). If you are not interested in closed ports, timeouts or connections without responses, I recommend filtering the data down to the "status code 1" lines, which are the ones that actually include a response. The payload is stored in "quoted-printable" format, so you should decode it before searching for plaintext or doing similar analysis.
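As a rough illustration of that filtering and decoding step, here is a minimal Python sketch. It is not the script mentioned in this post; the function names are made up for the example, and it assumes the columns are separated by whitespace and that the payload is the fourth column, as in the sample above.

```python
import quopri


def parse_probe_line(line):
    """Split one serviceprobes line into (ip, timestamp, status, payload).

    Assumption: columns are whitespace-separated and the optional
    quoted-printable payload is everything after the third column.
    """
    fields = line.rstrip("\r\n").split(None, 3)
    if len(fields) < 3:
        return None  # not a well-formed record
    ip, timestamp, status = fields[0], int(fields[1]), int(fields[2])
    raw = fields[3] if len(fields) > 3 else ""
    # Turn "=20", "=0D=0A" etc. back into the original bytes.
    payload = quopri.decodestring(raw.encode("latin-1"))
    return ip, timestamp, status, payload


def responses_only(lines):
    """Yield only the records with status code 1 (an actual response)."""
    for line in lines:
        record = parse_probe_line(line)
        if record is not None and record[2] == 1:
            yield record
```

Fed with the unpacked text (for example by iterating over sys.stdin), responses_only() yields one tuple per answered probe, with the payload as raw bytes that may contain newlines.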

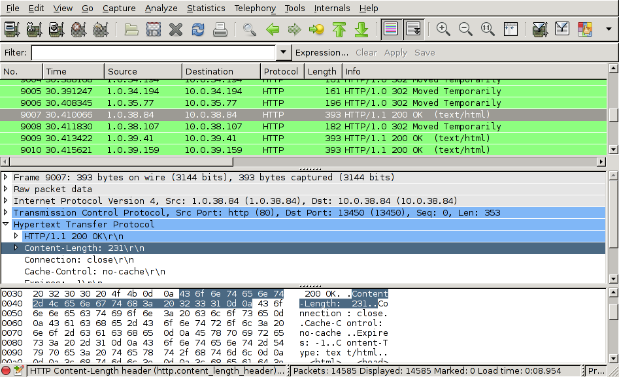

For my initial tests I put together a script that filters the data coming from the unpacker, ignoring every line with status != 1, and decodes the payload. Once the payload is no longer quoted-printable, it cannot be stored in line-based form anymore, because the decoded payload may itself contain newlines. I decided to quickly write out PCAP files with a crafted IP/TCP header, as there are quite good libraries and tools out there to process data from PCAPs. You can find my small script here if you're interested. Using PCAP is certainly not optimal - using some database file format or other framing would be better.
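To give an idea of what "a crafted IP/TCP header" can look like, here is a minimal sketch using only the Python standard library. It is not the script linked above. The choices in it are assumptions made for the example: the raw-IP link type (101), the placeholder destination address and port, the fixed PSH/ACK flags, and the zeroed TCP checksum.

```python
import socket
import struct

LINKTYPE_RAW = 101  # assumption: packets start directly with the IPv4 header


def pcap_global_header(snaplen=65535):
    """Classic little-endian PCAP file header (24 bytes)."""
    return struct.pack("<IHHiIII", 0xA1B2C3D4, 2, 4, 0, 0, snaplen, LINKTYPE_RAW)


def ip_checksum(header):
    """Ones' complement checksum over a 20-byte IPv4 header."""
    total = sum(struct.unpack("!10H", header))
    while total >> 16:
        total = (total & 0xFFFF) + (total >> 16)
    return ~total & 0xFFFF


def fake_packet(src_ip, src_port, payload, dst_ip="0.0.0.0", dst_port=0):
    """Wrap a decoded payload in made-up IPv4 and TCP headers."""
    payload = payload[:65535 - 40]  # IPv4 total length is a 16-bit field
    # TCP: ports, seq=0, ack=0, data offset 5 words, PSH|ACK, window,
    # checksum left at 0 (not computed in this sketch), urgent pointer.
    tcp = struct.pack("!HHIIBBHHH", src_port, dst_port, 0, 0, 5 << 4, 0x18, 65535, 0, 0)
    ip = struct.pack("!BBHHHBBH4s4s", 0x45, 0, 40 + len(payload), 0, 0, 64, 6, 0,
                     socket.inet_aton(src_ip), socket.inet_aton(dst_ip))
    ip = ip[:10] + struct.pack("!H", ip_checksum(ip)) + ip[12:]
    return ip + tcp + payload


def pcap_record(timestamp, packet):
    """Per-packet PCAP record header followed by the packet bytes."""
    return struct.pack("<IIII", timestamp, 0, len(packet), len(packet)) + packet


def write_pcap(fileobj, records, port):
    """Write (ip, timestamp, status, payload) tuples to a binary file object."""
    fileobj.write(pcap_global_header())
    for ip, timestamp, _status, payload in records:
        fileobj.write(pcap_record(timestamp, fake_packet(ip, port, payload)))
```

The probed host is used as the source address, so each response shows up as a single packet coming from that IP and port. Payloads longer than one IPv4 packet allows are simply truncated here, which is one more reason a proper database or framing format would be the better container.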

Running convert_census_probes in conjunction with the ZPAQ reference decoder:

unzpaq200 80-TCP_GetRequest-1.zpaq | python convert_census_probes.py 80 80-TCP_GetRequest-1-open.pcap

This leaves us with a Wireshark-readable PCAP with the decoded payloads:

There is still plenty of room for playing around with the dataset - putting together better processing scripts, maybe even coming up with a way to put the data into a database for improved analytical possibilities.
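One possible starting point for the database idea is SQLite, which ships with Python. The sketch below is only an illustration under my own assumptions: the table layout, the function names and the choice of SQLite are not part of the dataset or of any existing tooling around it.

```python
import sqlite3


def open_probe_db(path=":memory:"):
    """Open (or create) a database with one table of probe results."""
    db = sqlite3.connect(path)
    db.execute(
        """CREATE TABLE IF NOT EXISTS probes (
               ip        TEXT    NOT NULL,
               port      INTEGER NOT NULL,
               timestamp INTEGER NOT NULL,
               status    INTEGER NOT NULL,
               payload   BLOB
           )"""
    )
    db.execute("CREATE INDEX IF NOT EXISTS probes_by_ip ON probes (ip)")
    return db


def insert_probes(db, port, records):
    """Store (ip, timestamp, status, payload) tuples for one probed port."""
    with db:  # commits on success, rolls back on error
        db.executemany(
            "INSERT INTO probes VALUES (?, ?, ?, ?, ?)",
            ((ip, port, ts, status, payload) for ip, ts, status, payload in records),
        )


def find_payloads(db, needle):
    """Return the IPs whose decoded payload contains the given bytes."""
    rows = db.execute(
        "SELECT ip FROM probes WHERE instr(payload, ?) > 0 ORDER BY ip", (needle,)
    )
    return [row[0] for row in rows]
```

With the decoded payloads stored as BLOBs, a question like "which hosts answered with a particular Server header" becomes a single call such as find_payloads(db, b"Server: ") instead of another pass over the raw files.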

Next Steps

I'm going to be digging into the data more, and I expect we'll see a lot of folks in the security industry digesting and commenting on the findings in coming weeks.

I'd like to see more projects of this kind, conducted legally, and sharing information about the real state of play on the internet. Ultimately we need to raise awareness about the sad state of security across almost all our devices and systems. We have reached 2013 with our security and access protection far away from where they should be. Increased awareness, updated vendor priorities and more secure development are needed desperately.

These projects remind us that we should all employ monitoring tools and vulnerability management in order to identify flaws in our systems before the bad guys do.