I've been at the technical helm for dozens of demonstrations and evaluations of our incident detection and investigation solution, InsightIDR, and I've been running into the same conversation time and time again: SIEMs aren't working for incident detection and response. At least, they aren't working without investing a lot of time, effort, and resources to configure, tune, and maintain a SIEM deployment. Most organizations don't have the recommended three to five dedicated SIEM caretakers, and the result is the system produces a high volume of false positives that are difficult to investigate. Why is this happening, and what makes it so hard?

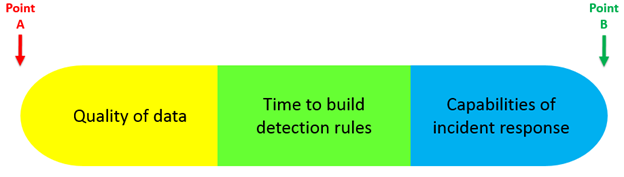

Let's visualize the incident detection and response process from start to finish: raw log ingestion to incident containment (Points A & B below). Between Point A and Point B, we need to correlate the logs, produce meaningful alerts, and act on those alerts. This gives us three interdependent components as we move from Point A (ingesting logs) to Point B (containing an incident):

- The yellow segment represents the data flowing into the system and how the system correlates it.

- The green segment represents the system's ability to detect malicious behavior.

- The blue section represents the admin's ability to react to alerts and contain incidents.

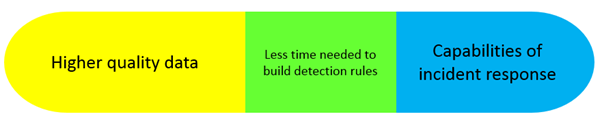

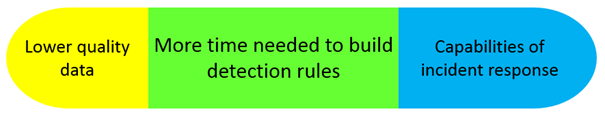

The idea is, the higher quality data the system has to work with, the farther right the yellow bar expands. On the other end of the spectrum, the better the capabilities of incident response are, the further left the blue section extends. The ultimate goal is to eliminate as much of the green section as possible, reducing the amount of time spent on building and maintaining rules.

Let's take a look at how SIEMs are tackling the challenge:

Quality of Data

The length of the yellow segment corresponds to the quality of data, meaning how well the system is able to ingest and correlate logs from various sources without human help.

Correlation is the key here - stronger correlation between various pieces of log data (IPs, assets, users, accounts, etc.) makes it much easier for the system and the admin to understand how these different log events interrelate. Strong correlation results in less work on the administrator's part for building rules because the system is automatically correlating data and the admin is just responsible for defining alerts based on anomalous or malicious behavior. On the other hand, if data is arriving in the system in raw log format with no pre-built correlation, then the admin will need to define correlation rules before he or she can build detection rules; without correlation, alerts aren't contextual and end up producing a deafening amount of noise. To illustrate:

Another major factor here is coverage – most SIEM solutions can handle internal logs, but enterprise cloud solutions (eg, AWS, Office365, Okta, etc.) are increasingly common, enabling employees to work from home more easily. These remote users and cloud networks are ignored by most SIEM solutions. Additionally, very few organizations that I've spoken to are gathering data from all their endpoints – this makes it impossible to detect more sophisticated attacker techniques such as lateral movement, event log deletion, and local privilege escalation.

Time to Build Detection Rules

The green segment's job is basically to bridge the gap between the data in the system and the capabilities of the incident response team. Ideally, this section is nonexistent, meaning the admin doesn't need to spend any time building or maintaining rules. He or she spends all their time responding to and containing incidents. Realistically though, every organization will, at the very least, have some unique rules to build based on internal policies, network architecture, and what they consider sensitive data. Even so, if we're able to provide some detection rules without configuration, and leave only a handful of organization-specific rules to be configured, we've successfully cut down the green section.

Unfortunately, extensive manual configuration is unavoidable with SIEM solutions – every log source, every correlation rule, every dashboard, and every alert has to be setup by the admin. Per Gartner, this results in an average setup time of six months just to get basic use cases and workflows configured, with a full deployment taking upwards of three years. Oh, and these statistics are assuming you have 3-5 individuals dedicated to deploying, maintaining, and tuning the SIEM – full time. Not many security teams that I talk to have the resources to dedicate even a single person to their SIEM, let alone five, which leaves us here:

Capabilities of Incident Response

The last piece of this equation are the capabilities of the incident response team. Like the quality of data segment, we actually want this portion to be as extensive as possible – the larger the blue section is, the better poised the IR team is to quickly contain incidents. There are a lot of variables here, but the key factors are security tools and human resources. As most organizations have a fairly static headcount, our goal here is to provide useful tools to help facilitate incident response. Good tools make it easier for an incident responder to understand the what, where, and who of a security incident - what happened, where in the network did it occur, and who was involved. This gives the incident response team the information they need to swiftly and successfully contain the incident.

The detection rules previously created produce an alert – the more finely tuned and contextual the alert is, the easier it is for the incident responders to identify the users and/or assets that have been compromised. The incident responders typically use log search tools to find other affected users/assets, and only once all compromised targets have been identified can the IR team move on to containment. Once identified, the IR team contains the incident by resetting passwords/pulling assets offline. Finally, the team can analyze the attack to find the root cause and remediate appropriately.

What's the bottom line?

There are two key metrics SIEM systems are supposed to address: time to detect, and time to contain. According to the Verizon Data Breach Investigations Report (DBIR), time to detect a breach is still measured in months. Time to contain a breach once it has been detected is still measured in days or weeks, and almost a quarter of breaches still take months to contain. This is largely due to the fact that traditional SIEM technologies require admins to build, maintain, analyze, and respond to alerts. If the past 10 years of SIEM technologies have taught us anything, it's that the problems are still there, and we need a new approach.

How does InsightIDR help?

- InsightIDR provides an out-of-the-box analytics and correlation engine to ingest logs from about 70 different security and IT solutions, correlate the data, and provide the context needed to produce meaningful alerts.

- Over 60 pre-built, contextual alerts give admins an advanced system for incident detection without the overhead required to build, maintain and tune alerting rules.

- The investigations feature gives incident responders an immediate understanding of the what, where, and who involved in an incident, along with the ability to run deeper forensic interrogations and correlate data from all log sources to further investigate an incident.

Related blog posts

Vulnerabilities and Exploits

ClickFix Phishing Campaign Masquerading as a Claude Installer

Nicholas Spagnola

Vulnerabilities and Exploits

FortiGate CVE-2025-59718 Exploitation: Incident Response Findings

Eric Carey, Olivia Henderson +1

Products and Tools

Identifying and Mitigating Potential Velociraptor Abuse

Christiaan Beek

Detection and Response

Rapid7 Q2 2025 Incident Response Findings

Chris Boyd