A Content Security Policy is a protocol that allows a site owner to control what resources are loaded on a web page by the browser, and how those resources may be loaded. This protocol was developed primarily to mitigate the impact of cross-site scripting (XSS) vulnerabilities. To understand exactly what this means, we need to dive into how modern websites and web applications work.

Most modern web apps consist of both static and dynamic content. An example of static content might be the copy for an “About” page that is hard-coded into HTML, or more likely, the general HTML structure of a navigation bar on a website. Dynamic content, on the other hand, is the portion of the site that is conditionally generated, typically from user-provided input. In a chat application, both the username and the text of each message are dynamic content. The user generates some input, and that input is dynamically inserted into the HTML document. Unfortunately, the browser has no idea whether a given block of code or text was generated statically or dynamically. This is what opens the path for XSS attacks.

In a successful XSS attack, an attacker is able to supply input to an application that tricks the browser into executing code of the attacker’s choice. For example, if an application allows you to supply a username that is then rendered somewhere on the page, an attacker could supply the username “<script>window.alert("bazinga!");</script>”. When that string is inserted into the page’s HTML, or Document Object Model (DOM), the browser interprets it as a valid JavaScript block and executes it. This is an example of an XSS attack caused by inline script injection, but there are many different ways of injecting and executing JavaScript.
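To make the problem concrete, here is a minimal sketch of a server-side template that interpolates a username into HTML. The function names are hypothetical, and Python’s standard `html.escape` stands in for whatever output encoding a real framework performs. The unsafe version passes the payload through as live markup. The escaped version turns it into inert text, which is the kind of input-handling precaution that a CSP is meant to back up, not replace.

```python
import html


def render_greeting_unsafe(username: str) -> str:
    # Interpolates user input directly into markup: anything that looks like
    # HTML (including a <script> tag) reaches the browser verbatim.
    return f"<p>Welcome, {username}!</p>"


def render_greeting_escaped(username: str) -> str:
    # html.escape converts &, <, >, " and ' into character references, so the
    # browser displays the input as text instead of parsing it as markup.
    return f"<p>Welcome, {html.escape(username)}!</p>"


payload = '<script>window.alert("bazinga!");</script>'
print(render_greeting_unsafe(payload))
print(render_greeting_escaped(payload))
```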

Another example of content injection (although not technically XSS) came in 2014, when Comcast came under fire for injecting advertisements into the unencrypted browsing sessions of users connected to its free Wi-Fi hotspots. Comcast intended to offset the cost of the free hotspots by performing what amounts to a man-in-the-middle attack: extra JavaScript was added to the content of web pages, causing advertisements to pop up. These ads were not approved by the website owners, and the practice raised serious security concerns about the ability to inject third-party content into browsing sessions. This particular vector for content injection was not possible over encrypted connections, which is part of the reason I recommend that every website require HTTPS, but that’s a conversation for another day.

So, as it turns out, many things can influence what ends up being rendered in a user’s browser. Precautions such as validating user input and sanitizing data before rendering it help, but they only get you so far. Developers aren’t perfect, and they should assume that a malicious user will eventually find a way to bypass their protections and render arbitrary content. That’s where the Content Security Policy comes into play.

A Content Security Policy (CSP) is a set of directives that tells the browser all the places from which the web app author expects content to be loaded. Essentially, it acts as an allowlist of safe content for the DOM. While restricting inline JavaScript is probably the most important aspect of a CSP, it can also specify many other types of content restrictions, including sources of CSS, valid iframe sources, which plugins (such as Flash) may be loaded, and approved WebSocket destinations. A CSP does not prevent XSS in the sense that it does nothing to stop malicious input from being rendered in the DOM; what it does do is mitigate the impact of an XSS attack by instructing the browser not to execute scripts that are not explicitly allowed.
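A policy is delivered as a single string of semicolon-separated directives. Each directive is a name followed by the sources it allows. The sketch below builds such a string from a Python dict. The helper name and all hostnames are illustrative assumptions. The directives chosen map onto the examples above: `script-src` for scripts, `style-src` for CSS, `frame-src` for iframes, `object-src` for plugins, and `connect-src` for WebSocket destinations.

```python
def build_policy(directives: dict[str, list[str]]) -> str:
    # Hypothetical helper: each directive is its name followed by its
    # space-separated source expressions; directives are joined with "; ".
    # Directives that take no sources (e.g. upgrade-insecure-requests) are
    # emitted as the bare name.
    parts = []
    for name, sources in directives.items():
        parts.append(" ".join([name, *sources]) if sources else name)
    return "; ".join(parts)


# Illustrative policy; the hostnames are placeholders, not recommendations.
policy = build_policy({
    "default-src": ["'self'"],
    "script-src": ["'self'", "https://www.google-analytics.com"],
    "style-src": ["'self'"],
    "frame-src": ["https://www.youtube.com"],
    "object-src": ["'none'"],
    "connect-src": ["'self'", "wss://chat.example.com"],
})
print(policy)
```

Keeping the policy as structured data and serializing it in one place makes it easier to review and diff than a hand-edited header string.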

What can a CSP do?

A Content Security Policy mostly restricts the sources and types of content, and where in the page that content can be presented, or rendered. In terms of preventing XSS, inline JavaScript tends to be the primary vector for malicious content. Inline JavaScript is code placed directly inside a “<script>” tag in the HTML document rather than loaded from a separate file through the tag’s src attribute. Blocking inline JavaScript also stops code from executing in inline event handler attributes such as “onclick” or “onmouseover”, which are another common vector for XSS. Generally speaking, it is bad practice to use inline JavaScript at all, so using a CSP to prevent it from executing is not only a great security feature, it also enforces good code hygiene.
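The following is a rough model of how a browser decides whether inline scripts may run under a given policy. It is a simplified sketch, not the spec algorithm, and the function names are hypothetical. It assumes that `script-src` falls back to `default-src`, that scripts are unrestricted if neither is present, and that inline code runs only when `'unsafe-inline'` is listed. Nonces, hashes, `'strict-dynamic'`, and the CSP Level 3 `script-src-elem`/`script-src-attr` directives are deliberately ignored. In real browsers, the presence of a nonce or hash causes `'unsafe-inline'` to be ignored.

```python
def parse_policy(policy: str) -> dict[str, list[str]]:
    # Split "name src src; name src" into {name: [src, ...]}.
    directives: dict[str, list[str]] = {}
    for chunk in policy.split(";"):
        tokens = chunk.split()
        if not tokens:
            continue
        # Directive names are case-insensitive; only the first occurrence counts.
        directives.setdefault(tokens[0].lower(), tokens[1:])
    return directives


def allows_inline_scripts(policy: str) -> bool:
    # Simplified: inline <script> blocks and event-handler attributes such as
    # onclick run only if the effective script policy lists 'unsafe-inline'.
    directives = parse_policy(policy)
    # script-src falls back to default-src; with neither, scripts are unrestricted.
    sources = directives.get("script-src", directives.get("default-src"))
    if sources is None:
        return True
    return "'unsafe-inline'" in (s.lower() for s in sources)


print(allows_inline_scripts("default-src 'self'"))                   # False
print(allows_inline_scripts("script-src 'self' 'unsafe-inline'"))    # True
```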

In a similar vein, a CSP can prevent code from being executed through JavaScript’s “eval()” function, another common source of XSS vulnerabilities. Note that many popular graphing libraries use “eval()” despite it being poor practice, which makes it nearly impossible for some projects to enforce this restriction.
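The eval restriction follows the same fallback logic as the inline-script check. The hypothetical helper below is a simplified sketch. It assumes that string-to-code features such as `eval()` are permitted only when the effective script policy lists `'unsafe-eval'`, or when no `script-src`/`default-src` restricts scripts at all. A project that depends on an eval-based library would have to add `'unsafe-eval'`, which reopens exactly this vector.

```python
def allows_eval(policy: str) -> bool:
    # Hypothetical helper; simplified model of the 'unsafe-eval' check.
    directives: dict[str, list[str]] = {}
    for chunk in policy.split(";"):
        tokens = chunk.lower().split()
        if tokens:
            # Only the first occurrence of a directive name counts.
            directives.setdefault(tokens[0], tokens[1:])
    # script-src falls back to default-src; with neither, nothing is restricted.
    sources = directives.get("script-src", directives.get("default-src"))
    return sources is None or "'unsafe-eval'" in sources


print(allows_eval("script-src 'self'"))                   # False
print(allows_eval("script-src 'self' 'unsafe-eval'"))     # True
```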

A CSP can also restrict where scripts may be loaded from. For example, a ‘script-src’ directive containing only the ‘self’ keyword allows scripts to be loaded only from the same origin (scheme, host, and port) as the page, and blocks scripts from any other domain (Google Analytics being a common example).
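The ‘self’ keyword is an origin comparison. The minimal sketch below compares the (scheme, host, port) triple of the page against the script URL, filling in default ports. It is a simplification: CSP Level 3 also lets ‘self’ match an upgrade from http to https (or ws to wss) on the same host, and this sketch ignores that relaxation. The function names and URLs are illustrative.

```python
from typing import Optional
from urllib.parse import urlsplit

# Default ports used when a URL does not specify one explicitly.
DEFAULT_PORTS = {"http": 80, "https": 443, "ws": 80, "wss": 443}


def origin(url: str) -> tuple[str, str, Optional[int]]:
    # An origin is the (scheme, host, port) triple; urlsplit lower-cases
    # the scheme and hostname for us.
    parts = urlsplit(url)
    port = parts.port if parts.port is not None else DEFAULT_PORTS.get(parts.scheme)
    return parts.scheme, parts.hostname or "", port


def matches_self(page_url: str, resource_url: str) -> bool:
    # Simplified 'self': the resource must share the page's exact origin.
    return origin(page_url) == origin(resource_url)


print(matches_self("https://example.com/signup", "https://example.com/js/app.js"))  # True
print(matches_self("https://example.com/signup",
                   "https://www.google-analytics.com/analytics.js"))               # False
```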

However, this functionality is not limited to scripts. A CSP can also restrict the sources of styles, form actions, fonts, iframes, or even hyperlink destinations. The set of directives is quite extensive; you could fill an entire training course with them and all their options. For the sake of this blog, I’ll leave exploring them as an exercise for the reader and address individual directives as needed.

CSP violation enforcement

It’s important to note that enforcement of CSP violations is the responsibility of the browser. As a result, the details of implementation can vary from browser to browser, as can support for individual CSP directives. At the time of writing, CSP Level 3 is still a draft, but it has fairly universal support among modern browsers.

CSPs have two primary enforcement modes: the standard mode, which enforces the policy by blocking violations, and Report Only mode, which does not block violating content but simply reports it. This brings us to a pretty interesting directive, “report-uri” (being superseded by “report-to”, which names a separately configured reporting endpoint instead of taking a URI directly). “report-uri” accepts a URI to which a JSON report describing each violation is sent via HTTP POST. An example report is shown below.

```json
{
  "csp-report": {
    "document-uri": "http://example.com/signup.html",
    "referrer": "",
    "blocked-uri": "http://example.com/css/style.css",
    "violated-directive": "style-src cdn.example.com",
    "original-policy": "default-src 'none'; style-src cdn.example.com; report-uri /_/csp-reports",
    "disposition": "report"
  }
}
```
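On the receiving side, a report endpoint only needs to parse that JSON body. The sketch below uses Python’s standard `json` and `http.server` modules. The summary field names, the handler class, and the idea of printing reports are illustrative assumptions, not a production collector. It assumes the legacy `report-uri` format shown above, where the fields are wrapped in a `"csp-report"` object and browsers send the body with the `application/csp-report` content type.

```python
import json
from http.server import BaseHTTPRequestHandler


def summarize_report(body: bytes) -> dict[str, str]:
    # Legacy report-uri payloads wrap the violation fields in "csp-report".
    report = json.loads(body)["csp-report"]
    return {
        "page": report.get("document-uri", ""),
        "blocked": report.get("blocked-uri", ""),
        "directive": report.get("violated-directive", ""),
        "disposition": report.get("disposition", ""),
    }


class CspReportHandler(BaseHTTPRequestHandler):
    # Hypothetical endpoint: accept a POSTed report, log a summary,
    # and reply 204 No Content.
    def do_POST(self) -> None:
        length = int(self.headers.get("Content-Length", 0))
        print(summarize_report(self.rfile.read(length)))
        self.send_response(204)
        self.end_headers()
```

For local experiments, one could serve it with `HTTPServer(("127.0.0.1", 8000), CspReportHandler).serve_forever()` and point a Report Only policy’s `report-uri` at that address.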

When getting started with CSPs, it is typically recommended to begin in Report Only mode and to switch to enforcing mode once you are confident that the policy hasn’t broken your site for legitimate users.

Implementation

There are a couple of notable ways to deliver a CSP. The primary mechanism is an HTTP response header named “Content-Security-Policy” (or “Content-Security-Policy-Report-Only” to disable enforcement). The second option is a little-known variant of the “<meta>” HTML tag in the head section of the HTML document, for example: <meta http-equiv="Content-Security-Policy" content="<csp policy>" />

This second option is useful if you don’t control the server’s headers but have complete control of the content. Be aware that a policy delivered this way is always enforced (there is no report-only variant) and cannot use the frame-ancestors, report-uri, or sandbox directives.
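Both delivery mechanisms can be sketched as small helpers. The names below are hypothetical. The header helper switches between the enforcing and Report Only header names, which supports the “start in Report Only, then enforce” rollout described earlier. The meta helper HTML-escapes the policy so that quoted keywords like `'self'` cannot break out of the `content` attribute. The browser decodes the character references before parsing the policy.

```python
import html


def csp_header(policy: str, report_only: bool = False) -> tuple[str, str]:
    # The Report-Only header reports violations without blocking anything.
    name = ("Content-Security-Policy-Report-Only" if report_only
            else "Content-Security-Policy")
    return name, policy


def csp_meta_tag(policy: str) -> str:
    # A <meta http-equiv> policy is always enforcing; escape it for use
    # inside a double-quoted HTML attribute.
    return ('<meta http-equiv="Content-Security-Policy" '
            f'content="{html.escape(policy, quote=True)}">')


print(csp_header("default-src 'self'", report_only=True))
print(csp_meta_tag("default-src 'self'"))
```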

CSP usage on Alexa Top 1M

I used Project Sonar to run a survey of CSPs to identify the market penetration of this security feature, common configuration options, and any other interesting or unexpected configurations. Before diving into the results, I first want to talk about a couple of limitations of the data.

There are some good reasons you might choose not to prioritize creating a Content Security Policy for your web property. You get the most bang for your buck from a Content Security Policy when the site you are protecting has a lot of dynamic content. Sites like Facebook, Twitter, and GitHub are composed almost entirely of user-generated content. Web forums, web portals for controlling devices, and content management systems are also the types of sites with dynamic content that would benefit most from a solid Content Security Policy. When analyzing the Alexa Top 1M domains, it’s important to note that many of these sites serve primarily static content. Sites that act as landing pages for large corporations or represent products or brands are likely not serving a significant amount of dynamic content. Additionally, many sites that do serve a lot of dynamic content still serve a mostly static landing page at the root of the domain and put the dynamic content at other paths. Our data covers only the root resource of each site in the Alexa Top 1M, so it likely underrepresents the presence of Content Security Policies among the sites that would actually benefit from having one.

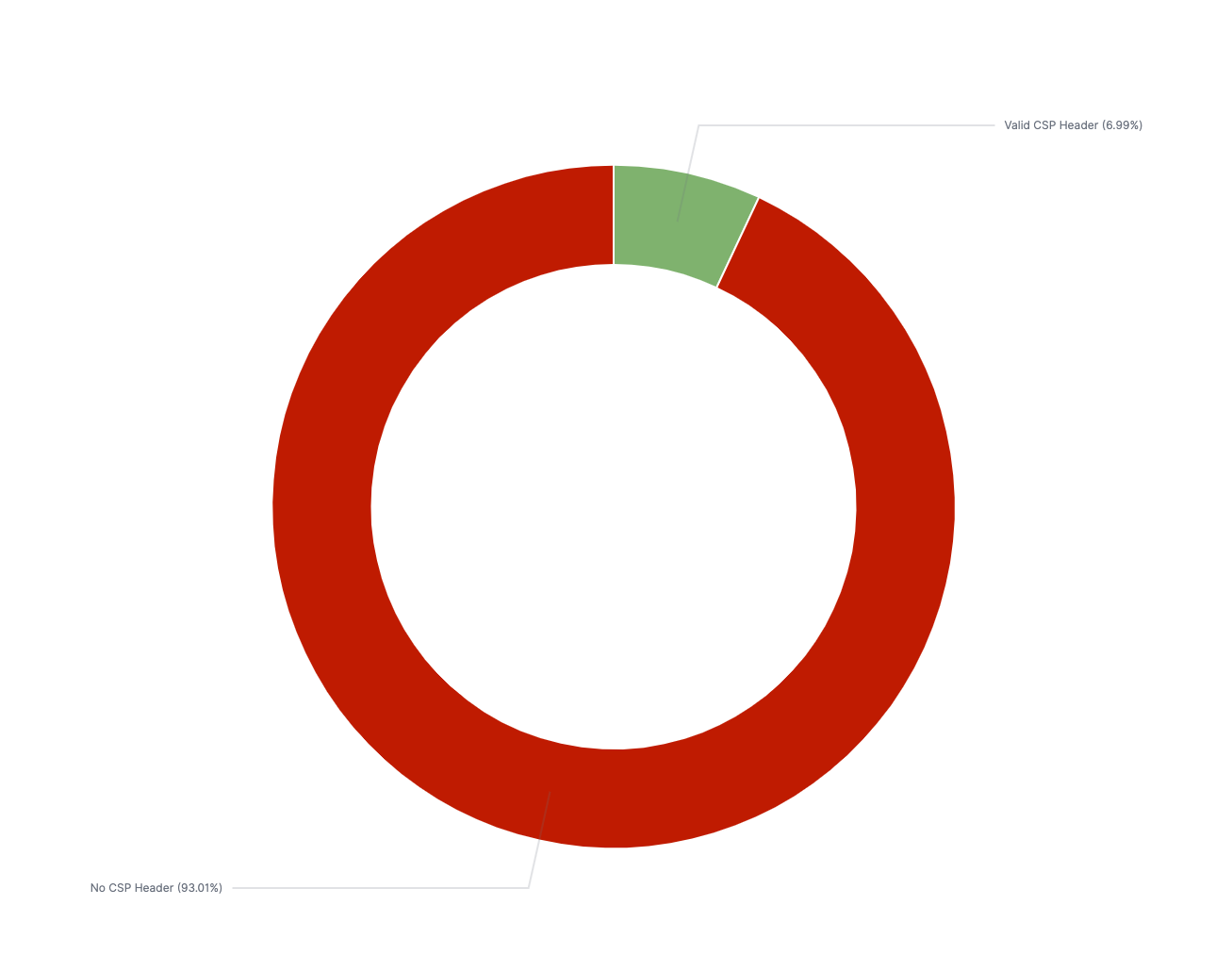

Prevalence of valid CSPs

About 7% of all sites in the Alexa Top 1 Million have a valid CSP of some kind (either an enforcing or a report-only policy). Of the sites with a valid CSP, just over 3% implement their policy with a meta tag in the HTML document.

It’s also important to call out the number of sites with invalid CSPs. While the number isn’t high, 252 sites in the Alexa Top 1M use a deprecated header (X-Content-Security-Policy or X-Webkit-CSP) without also including a valid header. There are even more instances of deprecated headers existing alongside valid CSP headers (786 in total), a configuration known to cause inconsistent behavior across browsers. Intuitively, you might expect old headers to provide backward compatibility, but in this case, every occurrence of them is detrimental.
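The three situations described above (valid only, deprecated only, and deprecated alongside valid) can be distinguished from a response’s header names alone. The sketch below is a hypothetical classifier with made-up category labels. It is not the survey’s actual tooling. It relies only on the fact that HTTP header names are case-insensitive.

```python
VALID = {"content-security-policy", "content-security-policy-report-only"}
DEPRECATED = {"x-content-security-policy", "x-webkit-csp"}


def classify_csp_headers(header_names: list[str]) -> str:
    # Hypothetical labels; header names are case-insensitive, so normalize first.
    names = {h.lower() for h in header_names}
    has_valid = bool(names & VALID)
    has_deprecated = bool(names & DEPRECATED)
    if has_valid and has_deprecated:
        return "valid+deprecated"   # known to behave inconsistently across browsers
    if has_deprecated:
        return "deprecated-only"    # effectively no CSP in modern browsers
    if has_valid:
        return "valid"
    return "none"


print(classify_csp_headers(["Content-Type", "X-WebKit-CSP"]))  # deprecated-only
```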

Common directives

All CSP directives are optional, and the spectrum of capabilities supported by different directives is broad. To understand how CSPs are being used in the wild and which features are being adopted most broadly, we broke the policies down by the directives they use.

The most common directive is default-src. This makes intuitive sense, as it provides the fallback for the other fetch directives, so it is typically implemented alongside them. The next most common is ‘script-src’. Again, this is a logical result, as the primary benefit of a CSP is mitigating XSS attacks by disallowing inline JavaScript execution. Next come img-src and style-src, almost certainly because images and styles are so frequently loaded as external resources and because these directives are easy to implement (both types of content tend to be served out of static directories).
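A tally like this is straightforward to reproduce on any collection of policy strings. The sketch below is illustrative and is not the study’s actual pipeline. It counts each directive name at most once per policy, so the result reads as “number of policies using this directive.” The sample policies are made up.

```python
from collections import Counter


def directive_counts(policies: list[str]) -> Counter:
    # Count each directive name once per policy (directive names are
    # case-insensitive, so normalize to lower case).
    counts: Counter = Counter()
    for policy in policies:
        names = {chunk.split()[0].lower() for chunk in policy.split(";") if chunk.strip()}
        counts.update(names)
    return counts


# Made-up sample policies for illustration only.
sample = [
    "default-src 'self'; script-src 'self'",
    "default-src 'none'; img-src *; script-src cdn.example.com",
    "default-src 'self'",
]
print(directive_counts(sample).most_common())
```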

Some directives are flagged as ‘experimental,’ meaning they are not yet fully standardized and have limited or inconsistent support across browsers. The most commonly used experimental directive is worker-src, which controls where Worker, SharedWorker, and ServiceWorker scripts can be loaded from. These are fairly advanced features that allow background tasks to run in the browser. ServiceWorkers are a key building block of Progressive Web Applications (PWAs), which are installable web applications with many of the features of native applications. This directive is likely gaining traction because more sites are adopting a PWA architecture, although that hypothesis remains to be confirmed.

Identical policies

One very interesting finding was a large number of completely identical policies. The policy “block-all-mixed-content; frame-ancestors 'none'; upgrade-insecure-requests;” appears in just over 15,000 results. Looking at the domains that apply this policy, it’s immediately clear that it belongs to hosted instances of the Shopify e-commerce platform. While Shopify was the only SaaS solution easily identifiable in our study, it’s likely that many software products that let you instantiate a web service have (or will eventually have) content security policies applied to them.
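Finding identical policies is mostly a matter of normalizing away cosmetic differences before counting. The sketch below assumes a fairly conservative normalization: lower-case directive names, collapse whitespace, and drop empty directives such as the one produced by a trailing semicolon. It keeps directive order as-is. The helper names are hypothetical.

```python
from collections import Counter


def normalize_policy(policy: str) -> str:
    # Lower-case directive names, collapse whitespace and drop empty
    # directives (e.g. after a trailing ";"); directive order is preserved.
    directives = []
    for chunk in policy.split(";"):
        tokens = chunk.split()
        if tokens:
            directives.append(" ".join([tokens[0].lower(), *tokens[1:]]))
    return "; ".join(directives)


def most_common_policies(policies: list[str], n: int = 3) -> list[tuple[str, int]]:
    return Counter(normalize_policy(p) for p in policies).most_common(n)


print(normalize_policy("block-all-mixed-content; frame-ancestors 'none'; upgrade-insecure-requests;"))
```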

Allowed sources

One hypothesis I held before conducting this study was that many sites would allow content hosted on a domain on the Public Suffix List, implying that it would be trivial to bypass the protection by obtaining a subdomain and hosting your malicious content there. I was pleasantly surprised to find that this was not the case.
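The risky pattern behind this hypothesis is a wildcard source sitting directly on a public suffix, for example `*.github.io`, where anyone can register a subdomain. A minimal check is sketched below. It assumes a tiny hand-picked sample of suffixes (`SAMPLE_PUBLIC_SUFFIXES`, to be verified against the current list). A real check would load the full Public Suffix List. Keyword sources like `'self'` and scheme-only sources like `https:` are skipped.

```python
from urllib.parse import urlsplit

# Illustrative sample only; a real check would load the full Public Suffix List.
SAMPLE_PUBLIC_SUFFIXES = {"github.io", "herokuapp.com", "appspot.com"}


def source_host(source: str) -> str:
    # Strip an optional scheme, path and port from a host-source expression.
    if "://" not in source:
        source = "https://" + source
    return urlsplit(source).hostname or ""


def risky_sources(sources: list[str]) -> list[str]:
    # Flag wildcard host sources whose base is itself a public suffix.
    flagged = []
    for src in sources:
        if src.startswith("'") or src.endswith(":"):
            continue  # keywords like 'self' and scheme sources like https:
        host = source_host(src)
        if host.startswith("*.") and host[2:] in SAMPLE_PUBLIC_SUFFIXES:
            flagged.append(src)
    return flagged


print(risky_sources(["'self'", "https://*.github.io", "www.google-analytics.com"]))
```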

Most of the third-party sources explicitly allowed in CSPs belong to Google (Analytics, Tag Manager, Fonts), Facebook, or Twitter, with the overwhelming majority allowing Google Analytics domains. These are fairly safe exceptions, and they generally show that organizations that take the time to implement these directives do so carefully.

Conclusions

A good Content Security Policy doesn’t prevent your web app from being vulnerable to XSS, but it does prevent many of those vulnerabilities from being exploitable. Adoption across the Alexa Top 1M has been meager, likely because the return on investment is disproportionately higher for sites with more dynamic content. The web development community would benefit from new tools, guidance, and frameworks for developing meaningful Content Security Policies.
