Last updated at Fri, 22 Dec 2023 14:56:06 GMT

Part 3: Red and Purple Teaming

This is the third and final installment in our 2021 series on attack surface analysis. In part 1, I described vulnerability assessment and covered both its value and its challenges. Part 2 explored the why and how of conducting penetration testing and gave some tips on what to look for when planning an engagement. In this installment, I’ll detail the final two analysis techniques: red and purple teaming.

Previously, we defined a red team engagement rather generically as a capabilities assessment. It’s time to get more specific with our terminology, so here is a better definition, once again courtesy of NIST:

“A [red team is a] group of people authorized and organized to emulate a potential adversary’s attack or exploitation capabilities against an enterprise’s security posture. The Red Team’s objective is to improve enterprise cybersecurity by demonstrating the impacts of successful attacks and by demonstrating what works for the defenders (i.e., the Blue Team) in an operational environment.”

(Source: https://csrc.nist.gov/glossary/term/Red_Team)

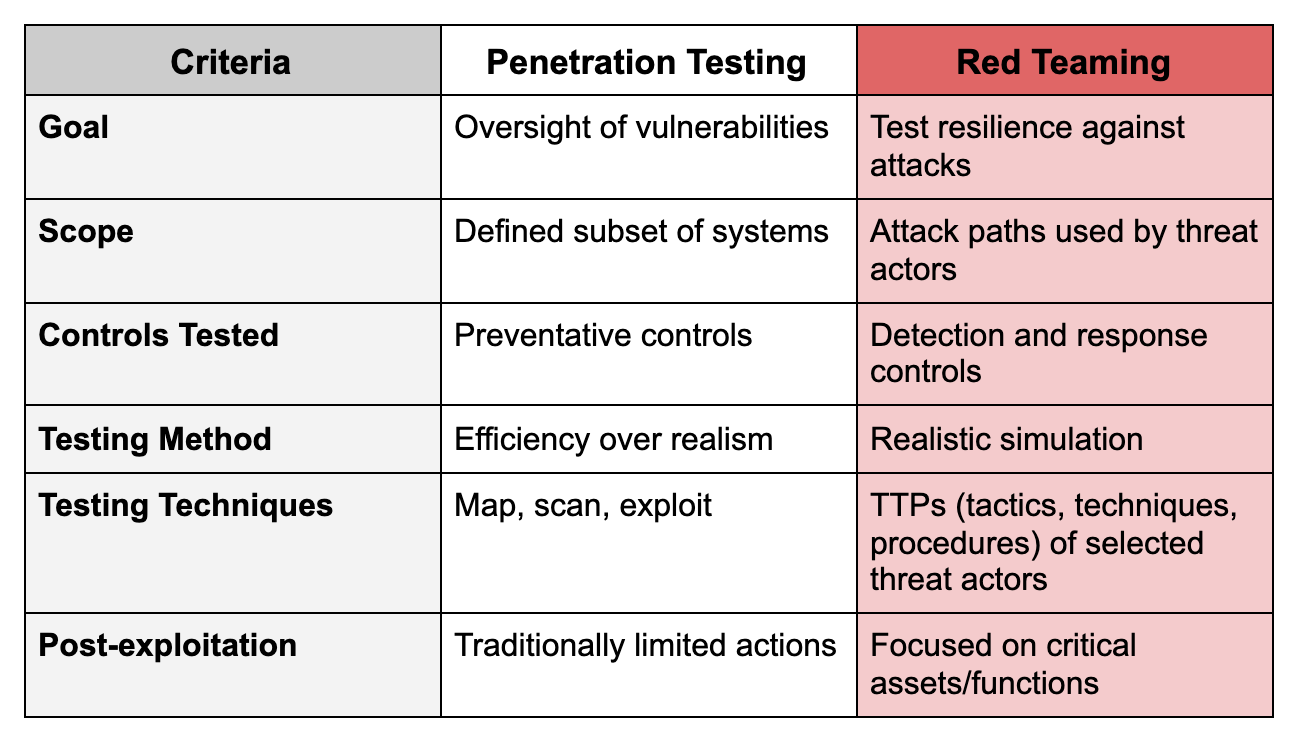

If you’re scratching your head right about now thinking, “Well, that sounds awfully similar to a pentest,” I’ve put together the following table to help illustrate the differences:

Additionally, just as there are various methodologies for pentesting, red teams have different options for how they perform their engagements. The most common one, which many of you have no doubt heard of, is the MITRE ATT&CK framework, but there are others out there. Each of the options below has a different focus, whether that’s red teaming for financial services or threat intelligence-based red teaming, so there is a flavor to meet your needs:

- TIBER-EU—Threat Intelligence-Based Ethical Red Teaming Framework

- CBEST—Framework originating in the UK

- iCAST—Intelligence-Led Cyber Attack Simulation Testing

- FEER—Financial Entities Ethical Red Teaming

- AASE—Adversarial Attack Simulation Exercises

- NATO—CCDCOE red team framework

You may be thinking, “There’s no way I can stand up an internal red team, and I don’t have the budget for a professional engagement, but I would really like to test my blue team. How can I do this on my own!?” Well, you don’t have to! There are plenty of open source tools available to help you take that first step. While the following tools are nowhere near as capable or extensive as a human-led team, they do give a number of useful insights into potential weaknesses in your detection and response capabilities:

- APTSimulator—Batch script for Windows that makes it look as if a system were compromised

- Atomic Red Team—Detection tests mapped to the MITRE ATT&CK framework

- AutoTTP—Automated Tactics, Techniques & Procedures

- Blue Team Training Toolkit (BT3)—Software for defensive security training

- Caldera—Automated adversary emulation system by MITRE that performs post-compromise adversarial behavior within Windows networks

- DumpsterFire—Cross-platform tool for building repeatable, time-delayed, distributed security events

- Metta—Information security preparedness tool

- Network Flight Simulator—Utility used to generate malicious network traffic and help teams to evaluate network-based controls and overall visibility

- Red Team Automation (RTA)—Framework of scripts designed to allow blue teams to test their capabilities, modeled after MITRE ATT&CK

- RedHunt-OS—Virtual machine loaded with a number of tools designed for adversary emulation and threat hunting
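To make the output of these tools a bit more concrete, here is a minimal Python sketch of how you might tally the results of an automated emulation run into a simple detection-gap report. It assumes each test is keyed by a MITRE ATT&CK technique ID (the way Atomic Red Team organizes its tests) and that you record whether your tooling raised an alert. The `EmulationResult` class, the helper functions, and the sample results are all hypothetical, and each technique is simplified to a single tactic even though ATT&CK maps many techniques to several.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EmulationResult:
    """One emulated test and whether the blue team's tooling caught it (hypothetical)."""
    technique_id: str  # MITRE ATT&CK technique ID, e.g. "T1059.001"
    tactic: str        # simplification: one tactic per technique
    detected: bool     # did any alert fire for this test?


def coverage(results):
    """Fraction of emulated techniques that produced a detection."""
    if not results:
        return 0.0
    return sum(r.detected for r in results) / len(results)


def detection_gaps(results):
    """Group undetected technique IDs by tactic."""
    gaps = {}
    for r in results:
        if not r.detected:
            gaps.setdefault(r.tactic, []).append(r.technique_id)
    return {tactic: sorted(ids) for tactic, ids in gaps.items()}


# Made-up sample results for illustration only.
results = [
    EmulationResult("T1059.001", "Execution", True),           # PowerShell
    EmulationResult("T1003.001", "Credential Access", False),  # LSASS memory dump
    EmulationResult("T1053.005", "Persistence", True),         # Scheduled Task
    EmulationResult("T1547.001", "Persistence", False),        # Registry Run keys
]

if __name__ == "__main__":
    print(f"Coverage: {coverage(results):.0%}")  # Coverage: 50%
    print(detection_gaps(results))
    # {'Credential Access': ['T1003.001'], 'Persistence': ['T1547.001']}
```

A report like this only tells you which techniques slipped past your detections; it says nothing about how well your people and processes responded, which is exactly the gap a human-led red team fills.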

Lastly, before we head into a description of purple teaming, I want to reiterate what we’ve discussed thus far. The goal of a red team engagement is not just to discover gaps in an organization’s detection and response capabilities. The purpose is to discover the blue team’s weaknesses in terms of processes, coordination, communication, etc., with the list of detection gaps being a byproduct of the engagement itself.

Purple Teaming

While the name may give away the upcoming discussion (red team + blue team = purple team), the purpose of the purple team is to enhance information sharing between both teams, not to replace or combine either team into a new entity.

- Red Team = Tests an organization’s defensive processes, coordination, etc.

- Blue Team = Understands attacker TTPs and designs defenses accordingly

- Purple Team = Ensures both teams are cooperating

  - Red teams should share TTPs with the blue team

  - Blue teams should share knowledge of defensive actions with the red team
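If you want a lightweight way to check that sharing really flows in both directions, the following minimal Python sketch models a shared exercise log in which Red records the procedure it ran for each technique and Blue records how it detected or responded. The `ExerciseEntry` structure, the `open_items` helper, and the sample entries are hypothetical illustrations, not part of any purple teaming framework or tool.

```python
from dataclasses import dataclass


@dataclass
class ExerciseEntry:
    """One technique exercised during a purple team session (hypothetical)."""
    technique_id: str        # MITRE ATT&CK technique ID
    red_procedure: str = ""  # what Red ran, shared with Blue
    blue_response: str = ""  # how Blue detected or responded, shared with Red


def open_items(entries):
    """List the techniques for which each team still owes the other its notes."""
    return {
        "red": [e.technique_id for e in entries if not e.red_procedure],
        "blue": [e.technique_id for e in entries if not e.blue_response],
    }


# Made-up sample log for illustration only.
entries = [
    ExerciseEntry("T1059.001", "Encoded PowerShell command", "EDR alert on encoded command line"),
    ExerciseEntry("T1003.001", "LSASS memory dump", ""),
    ExerciseEntry("T1547.001", "", "Alert on new Registry Run key"),
]

if __name__ == "__main__":
    print(open_items(entries))  # {'red': ['T1547.001'], 'blue': ['T1003.001']}
```

Empty lists for both teams are a decent sign that the feedback loop is working; anything left over becomes an action item for the next session.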

Realistically, if both of your teams are already doing this, then congratulations! You have a functional purple team. However, if you’re like me and are a fan of more form and structure, check out the illustration below:

(Source: https://github.com/DefensiveOrigins/AtomicPurpleTeam)

Seems pretty simple, right? In theory it is, but in practice it gets a little more difficult (though probably not in the way you’re thinking). The biggest hurdle to effective purple teaming is helping the blue and red teams overcome the competitiveness that exists between them. Team Blue doesn’t want to give away how they catch bad guys, and Team Red doesn’t want to give away the secrets of the dark arts. By breaking down those walls, you can show Blue that they become better defenders by understanding how Red operates, and show Red that they can become more effective by expanding their knowledge of defensive operations in partnership with Blue. In this way, the teams will actually want to work together (and dogs and cats will start living together, MASS HYSTERIA).

I hope the information above is helpful as you determine which analysis strategy makes sense for you! Check out the other posts in this series for more information on additional analysis techniques to take your program to the next level: